February 24, 2010

Grizzled Advice from Business & Legal Primer for Game Development

[Two years ago, I wrote up a few lessons for inclusion in Business & Legal Primer for Game Development. I’d always meant to cross-post it here and was surprised to see I hadn’t already when I went looking for it to share with the folks over at PlayNoEvil in reply to a recent post. – Randy]

Here are three top-line lessons for those considering designing their own MMORPG, or the latest Facebook game for that matter…

1. Design Hubris Wastes Millions

Read all the papers/books/blogs written by your predecessors that you can – multi-user game designers are pretty chatty about their successes and failures. Pay close attention to their failures – try not to duplicate those. Believe it or not, several documented failures have been repeated over and over in multiple games, despite these freely available resources.

If you are going to ignore one of the lessons of those who went before, presumably because you think you know a better way, do it with your eyes wide open and be ready to change to plan B if your innovation doesn’t work out the way you expected. If you want to hash your idea out before committing it to code, consider consulting with the more experienced designers – they post on Terra Nova (http://blogs.terranova.com/) and talk to budding designers on the Mud-Dev (http://www.kanga.nu/) mailing list, amongst other places. Many of them respond pretty positively to direct contact via email – just be polite and ask your question clearly – after all, they are busy building their own worlds.

2. Beta Testers != Paying Customers

One recurring error in multi-user game testing is assuming that Beta users of a product will behave like real customers would. They don’t, for several reasons:

A. Beta testing is a status symbol amongst their peers

“I’m in the ZYXWorld Limited Beta!” is a bragging right. Since it has street-cred value, this leads the user to be on their best behavior. They will grief much less. They will share EULA-breaking hacks with each other much less. They will harass much less. They won’t report duping bugs. The eBay aftermarket for goods won’t exist. In short, anything that would get them kicked out of the beta won’t happen anywhere near as often as when the product is released.

B. Beta testers aren’t paying.

Paying changes everything. During the Beta, the users work for you. When you release the game, you are working for them. Now some users will expect to be allowed to do all sorts of nasty things that they would never have done during the Beta. Those who were Beta users (and behaved then) will start to exploit bugs they found during the test period but never reported. Bad beta users save up bugs so they can use them after your product’s release to gain an edge over the new users, to dupe gold, or just to crash your server to show off to a friend.

So, you’re probably wondering: How do I get my Beta testers to show me what life on my service will really be like and to help me find the important bugs/exploits/crashes before I ship? Here are some strategies that worked for projects I worked on:

Crash Our World: Own up to the fact that Beta testers work for you and they do it for the status – incentivize the finding of crash/dupe/exploit bugs that you want them to find. Give them a t-shirt for finding one. Put their portrait on the Beta Hall Of Fame page. Give them a rare in-world item that they can carry on into general release. Drop a monument in the world, listing the names of the testers that submitted the most heinous bugs. Turn it into a contest. Make it more valuable to report a bug than to keep it secret.

Pay-ta: Run a Paid Beta phase (after Crash Our World) to find out how users will interact with each other socially (or using your in-game social/communications features.) During this phase of testing you will get better results about which social features to prioritize/fix for release. Encourage and/or track the creation of fan communities, content databases, and add-ons – it will help you understand what to prepare for, as well as build word-of-mouth marketing. But, keep in mind that there is one thing you can never really test in advance: How your user community will socially scale. As the number of users grows, the type of user will diversify. For most games, the hard-core gamers come first and the casual players come later. Be sure to have a community manager whose job it is to track customer sentiment and understand the main player groups. How your community scales will challenge your development priorities and the choices you make will have you trading off new-customer acquisition vs. veteran player retention.

3. There Are No Game Secrets, Period

Thanks to the internet – in-game puzzles are solved for everyone at the speed of the fastest solver. Read about “The D’nalsi Island” adventure in Lucasfilm’s Habitat where the players consumed hundreds of development hours in only tens of minutes.

The Lesson? Don’t count on secrets to hold up for long. Instead, treat game walk-thru websites as a feature to be embraced instead of the bane of your existence. “But,” you’ll say, “I could create a version of my puzzle that is customized (randomized) for every user! That will slow them down!” Don’t bother; it will only upset your users.

The Tragedy of the Tapers

Consider the example of the per-player customized spell system in the original Asheron’s Call (by Turbine, Inc.): Each magic spell was designed to consume several types of resources: scarabs, herbs, powders, potions, and colored tapers. The designers thought it would be great to have the users actually learn the spells by having to discover them through experimentation. The formula was different for every spell and the tapers were different for every user.

One can just hear the designer saying “That’ll fix those Internet spoilers! With this system, they each have to learn their own spells!” But, instead of feeling enjoyment, the players became frustrated with what seemed to be nothing other than a waste of their time and resources burning spell components as they were compelled to try the complete set of exponential combinations of tapers for no good reason.

What was interesting is that the users got frustrated enough to actually figure out the exact method of generating the random seed to determine the tapers for each user as follows:

Second Taper = (SEED * [ Talisman + (Herb + 3) + ((Powder + Potion) * 2) + (Scarab - 2) ] ) mod 12

[Modified from Jon Krueger’s web page on the subject.]
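To make the formula concrete, here is a minimal Python sketch of the calculation the plug-in automated. It assumes that each component (talisman, herb, powder, potion, scarab) and the per-player seed are encoded as small integers; that encoding and the function name are illustrative guesses, not the game’s or the plug-in’s actual data format.

```python
def second_taper(seed, talisman, herb, powder, potion, scarab):
    """Return the second-taper index (0-11) using the player-derived formula.

    All arguments are assumed to be integer codes; how the game numbered
    its components is not specified here.
    """
    total = talisman + (herb + 3) + ((powder + potion) * 2) + (scarab - 2)
    return (seed * total) % 12


# Hypothetical component codes, purely for illustration.
print(second_taper(seed=7, talisman=1, herb=2, powder=3, potion=4, scarab=5))  # 5
```

With only twelve possible results and a fixed per-player seed, a lookup like this is trivial once the formula is known, which is the point: the "secret" survived only until someone wrote it down.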

The players put this all into a client plug-in to remove the calculation overhead, and were now able to correctly formulate the spells the very first time they tried. Unfortunately, this meant that new users (who didn’t know about the plug-in) were likely to have a significantly poorer experience than veterans.

To Turbine’s credit, they revised the game in its second year to remove the need for most of the spell components and created rainbow tapers, which worked for all users in all spells, completely canceling the original per-player design.

Hundreds of thousands of dollars went into that spell system. The users made a large chunk of that effort obsolete very quickly, and Turbine then had to pay for more development and testing to undo their design.

Learn from Turbine’s mistake: focus on making your game fun even if the player can look up all the answers in a database or a plug-in.

Don’t start a secrecy arms race with your users. You’ll lose. Remember: There are more of them than there are of you, and collectively they have more time to work on your product than you do.

December 9, 2009

Creatures Of Habitat (@1up.com)

There is a loving historical tribute to the role that Lucasfilm’s Habitat played in the history of MMOs at 1up.com:

Creatures of Habitat

What modern day MMORPGs borrowed from Lucasfilm’s ahead-of-its time adventure — and what they still could learn from it.

By Scott Sharkey

After another year of massively multiplayer online game crib deaths, we can’t help but be reminded of the MMOG that started the whole thing back in 1985 — well over a decade before the genre even had a name. Lucasfilm Games’ Habitat remains an unaccountable anomaly in the history of videogames, a multiplayer online world from the days long before the advent of the World Wide Web. It’s the sort of historical oddity that stands out as dramatically as, say, the discovery of a fossilized dinosaur holding a machine gun: Incredible, but pretty damn cool.

…

Hell Is Other People

In addition to being perhaps the earliest example of a graphical MMO, Habitat was one of the first games to embrace the concept of emergent gameplay. Habitat’s designers threw a bunch of strange people into a huge space full of a whole lot of weird toys and items and just watched to see what would happen. It was a kitchen sink approach, in line with their philosophy that “[c]entral planning is impossible. Don’t even try.”

Of course, some of the things that happened were murder, theft, bug exploitation, and runaway currency inflation. The game’s designers advocated a hands-off approach to administrating the world, encouraging players to administer themselves, but they did intervene on occasion. The solutions to those problems (and the debate over whether they even were problems) were enlightening glimpses of the kinds of things that other designers would have to wrestle with decades down the road…

The two-page article is worth the read if you’d like a great short summary of what’s possible when no one tells you that chasing your dreams is a fool’s errand…

[Comments disabled on this post, please leave them at 1up.com.]

December 5, 2009

The Cake is a Lie: Reputation, Facebook Apps, and “Consent” User Interfaces

This is a cross-post from Randy’s other blog Building Web Reputation Systems and all comments should be directed there.

In early November, I attended the 9th meeting of the Internet Identity Workshop. One of the working sessions I attended was on Social Consent user interface design. After the session, I had an insight that reputation might play a pivotal role in solving one of the key challenges presented. I shared my detailed, yet simple, idea with Kevin Marks and he encouraged me to share my thoughts through a blog post—so here goes…

The Problem: Consent Dialogs

The technical requirements for the dialog are pretty simple: applications have to ask users for permission to access their sensitive personal data in order to produce the desired output—whether that’s to create an invitation list, or to draw a pretty graph, or to create a personalized high-score table including your friends, or to simply sign and attach an optional profile photo to a blog comment.

The problem, however, is this: users often don’t understand what they are being asked to provide, or the risks posed by granting access. It’s not uncommon for a trivial quiz application to request access to virtually the same amount of data as much more “heavyweight” applications (like, say, an app to migrate your data between social networks.) Explaining this to users, in any reasonable level of detail, just before running the application causes them to (perhaps rightfully) get spooked and abandon the permission grant.

Conflicting Interests

The platform providers want to make sure that their users are making as informed a decision as possible, and that unscrupulous applications don’t take advantage of their users.

The application developers want to keep the barriers to entry as low as possible. This fact creates a lot of pressure to (over)simplify the consent flow. One designer quipped that it reduces the user decision to a dialog with only two buttons: “Go” and “Go Away” (and no other text.)

The working group made no real progress. Kevin proposed creating categories, but that didn’t get anywhere because it just moved the problem onto user education: “What permissions does QuizApp grant again?”

Reputation to the Rescue?

All consent dialogs of this stripe suffer from the same problem: Users are asked to make a trust decision about an application that, by definition, they know nothing about!

This is where identity meets trust, and that’s the kind of problem that reputation is perfect for. Applications should have reputations in the platform’s database. That reputation can be displayed as part of the information provided when granting consent.

Here’s one proposed model (others are possible, this is offered as an exemplar).

The Cake is a Lie: Your Friends as Canaries in the Coal Mine of New Apps

First a formalism: when an application wants to access a user’s private Information (I), they have a set of intended Purposes (P) they wish to use it for. Therefore, the consent could be phrased thusly:

“If you let me have your (I), I will give you (P). [Grant] [Deny]”

Example: “If you give me access to your friends list, I will give you cake.”

In this system, I propose that the applications be compelled to declare this formulation as part of the consent API call. (P) would be stored along with the app’s record in the platform database. So far, this is only slightly different from what we have now, and of course, the application could omit or distort the request.

This is where the reputation comes in. Whenever a user uninstalls an application, the user is asked to provide a reason (abusive use of data, for example) and is specifically asked whether the promise of (P) was kept.

“Did this application give you the [cake] it promised?”

All negative feedback is kept, to be re-used later when other new users install the app and encounter the consent dialog. If they have friends who have already uninstalled this application, complaining that the “If (I) then (P)” string was false, then the moral equivalent of this would appear scrawled in the consent box:

“Randy says the [cake] was unsatisfactory.

Bryce says the [cake] was unsatisfactory.

Pamela says the application spammed her friends list.”
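As a rough illustration of the model above, here is a small Python sketch of the bookkeeping a platform might do. Everything in it (the `Platform` and `AppRecord` classes, their method names, and the in-memory dictionaries standing in for the platform database) is hypothetical; no real platform API is being described.

```python
from dataclasses import dataclass, field


@dataclass
class AppRecord:
    name: str
    information: str  # (I): what the app asks for
    purpose: str      # (P): what the app promises in return
    complaints: list = field(default_factory=list)  # (user, message) pairs


class Platform:
    def __init__(self):
        self.apps = {}     # app name -> AppRecord
        self.friends = {}  # user -> set of that user's friends

    def register_consent(self, app, information, purpose):
        """The app declares its 'If (I) then (P)' formulation."""
        self.apps[app] = AppRecord(app, information, purpose)

    def uninstall(self, user, app, promise_kept, other_reason=None):
        """Record negative feedback gathered at uninstall time."""
        record = self.apps[app]
        if not promise_kept:
            message = f"{user} says the [{record.purpose}] was unsatisfactory."
            record.complaints.append((user, message))
        if other_reason:
            record.complaints.append((user, f"{user} says {other_reason}."))

    def consent_dialog(self, user, app):
        """Build the dialog text, annotated with complaints from friends."""
        record = self.apps[app]
        lines = [
            f"If you let me have your {record.information}, "
            f"I will give you {record.purpose}. [Grant] [Deny]"
        ]
        friends = self.friends.get(user, set())
        lines += [msg for who, msg in record.complaints if who in friends]
        return lines
```

In this sketch only complaints from the viewing user’s friends are shown; widening that to friends-of-friends or to everyone is one of the design choices left open.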

Afterthoughts

Lots of improvements are possible (not limiting it to friends, and letting early-adopters know that they are canaries in the coal mine.) These are left for future discussion.

Sure, this doesn’t help early adopters.

But application reputation quickly shuts down apps that do obviously evil stuff.

Most importantly, it provides some insight to users by which they can make more informed consent decisions.

(And if you don’t get the cake reference, you obviously haven’t been playing Portal.)

October 19, 2009

Dirty Word Filters Fail (again.)

Elder Game: MMO game development – The Tragic Story of The Cussing NPCs

Champions NPC trips the dirty word filter.

October 16, 2009

Another take on “Smart people can rationalize anything”

Calvin Trillin, pontificating in the NYT about the late unpleasantness on Wall Street.

See Smart people can rationalize anything. Hmmm.

October 11, 2009

Crossing the streams

A lot of you who know me from the virtual worlds/online games/social media communities may not be aware that one of my other major avocations is photography. In particular, for the past several years I’ve been engaged in an extended project to photographically document the current state of the former Southern Pacific (now Union Pacific) Coast Line from San Francisco to the outskirts of Los Angeles. At this point I’ve accumulated something like 30,000 pictures, but until now they’ve just been piling up on my file server. However, a number of friends who have been following this particular adventure have begun nagging me to stop telling stories and produce some output already. Embracing the concept that Productive People Ship, I’ve started a photoblog, Unexpected Image, to show off this and my other photo work. If you’re the sort of person who likes this sort of thing, I hope this is the sort of thing you’ll like.

Off topic I know, but I justify posting about it here ’cause Randy is implicated in the story of how the photoblog got its name, which you can read about on the About page.

September 29, 2009

Fujitsu Habitat footage on YouTube

Tomoko Kojima (aka Oracle Layza) found some video of very early Fujitsu Habitat interaction (on the FM-Towns) on YouTube:

[embedded video 1] and [embedded video 2].

I’m most struck by the whiplash discrepancy between the music and the action in the first clip. That and the way the Internet continues to cough up nuggets of wonder from the distant past.

Thanks Tomoko!

September 25, 2009

The good news is, I don’t have to move to Seattle

From the shameless self-promotion department:

As some of you may know, I’ve spent most of the past year working as a contract CTO for Kirkland, WA based WeMade Entertainment USA, the (relatively) recently formed American arm of the Korean online games company WeMade Entertainment. The job involved overseeing the setting up of their engineering organization and architecting a community platform for their MMO offerings in the west. There were many cool things about this job, but one of the less cool things was that since it was 800 miles from home, it kept me racking up the frequent flyer miles and would have required me (and my family) to move to Seattle if it had gone on much longer. However that contract is over as of the end of September, and so I’m now once again a free agent.

For the moment I’m doing some work with Randy’s consulting group (and, I’ll admit, finding the consulting lifestyle congenial), but I’m definitely on the prowl for The Next Thing. If you know anybody looking for a virtual worlds guru, online games technology wizard, freelance code disciplinarian, or software development organization tamer, my resume is here. Pass the word.

September 7, 2009

Elko III: Scale Differently

Preface: This is the third of three posts on Elko, a server platform for sessionful, stateful web applications that I’m releasing this week as open source software. Earlier, Part I presented the business backstory for Elko. Part II, yesterday’s post, presented the technical backstory, laying out the key ideas that led to the thing. Today’s post presents a more detailed technical explication of the system itself, with particular emphasis on the scaling model that enables it all to work effectively.

In Part II I ranted at length about some of the unfortunate consequences of the doctrine of statelessness, the predominant paradigm for scaling web applications. Keeping the short-term state of a client-server session in the server’s memory is easy and therefore tempting, but, the story goes, you shouldn’t do that because it means you can’t scale your application: you just can’t handle the traffic from thousands or millions of users on the single machine whose memory it would be.

But this isn’t so much a server capacity problem as it is a traffic routing problem. In a traditional web server farm, load is distributed across multiple servers by arranging for successive HTTP requests to a particular named host to be delivered to different servers. Typically this is accomplished through provision of multiple IP addresses in the DNS resolution of the host name or through special load balancing routers in the server datacenter that virtualize the nominal host IP address, directing successive TCP sessions to different machines on the datacenter’s internal network.

This technique has a number of virtues, not least of which is that it is relatively simple. It takes advantage of the expectation that the loads that successive HTTP requests are going to place on the servers are likely to be uncorrelated, and thus delivering requests to servers on a simple round-robin schedule, or even randomly, will, through the statistical magic of large numbers, result in more or less even load distribution across the datacenter. This lack of correlation is usually a reasonable assumption, since the various browsers hitting a given site around the same time are, for most sites, uncoordinated (indeed, the deliberate coordination of such activity is the basis for a major class of denial of service attacks).

However, just as this scheme implies that a given browser has no control over (nor ability to predict) which server machine it’s actually going to be talking to when it sends an HTTP request, it similarly means that a given server has no say over which clients it will be servicing. Any service implementation that relies on local data coherence from one request to the next (other than of a statistical nature, as is exploited by caching) is thus doomed. Keeping session state in the server’s memory is right out.
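A toy Python sketch may help show why in-memory session state and round-robin dispatch don’t mix. The `StatefulServer` class and `round_robin_dispatch` function are invented for this illustration and stand in for a real server farm and load balancer.

```python
from itertools import cycle


class StatefulServer:
    """A server that naively keeps per-client session state in memory."""

    def __init__(self, name):
        self.name = name
        self.sessions = {}  # client id -> list of messages seen so far

    def handle(self, client_id, message):
        history = self.sessions.setdefault(client_id, [])
        history.append(message)
        # Return how many messages this server believes the client has sent.
        return len(history)


def round_robin_dispatch(servers, requests):
    """Deliver each request to the next server in rotation."""
    rotation = cycle(servers)
    results = []
    for client_id, message in requests:
        server = next(rotation)
        results.append((server.name, server.handle(client_id, message)))
    return results


servers = [StatefulServer(n) for n in ("A", "B", "C")]
requests = [("alice", "hello"), ("alice", "anyone there?"), ("alice", "hello??")]
print(round_robin_dispatch(servers, requests))
# [('A', 1), ('B', 1), ('C', 1)]
```

Each server thinks it is seeing the client’s first message, because the earlier ones landed somewhere else.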

Elko approaches the scaling problem in a different way. First of all, we embrace the concept of a session: a series of interactions between the client and the server that has a beginning, a middle, and an end. This is by no means an exotic abstraction; indeed, the TCP protocol that HTTP is layered on top of is sessionful in exactly this way. However, HTTP then takes the session abstraction away from us, leaving it to the web application framework (of which, in this sense, Elko is just one of many) to pile on a bunch of additional mechanism to put it back in again.

Whereas, from the client’s perspective, a TCP session represents a communications connection to a particular host on the network, an Elko session represents a communications connection to a particular context. Like a web page, a context has a distinct, addressable identity. Unlike a web page, a context has its own computational existence independent of who is communicating with it at any given moment. In particular, multiple clients can interact with a given context at the same time, and the context itself can act independent of any of its individual clients, including when there are no clients at all. For example, in a multi-user chat application, the contexts would most likely be chat rooms. In a real-time auction application, contexts might represent the various auctions that are going on.

The Elko platform provides several different types of servers, all based on a common set of building blocks. However, for purposes of the present discussion, there are two that matter: the Context Server and the Director.

A Context Server provides an environment in which contexts run. Context Servers are generic and fungible in the same kinds of ways that web servers are: need more capacity? Just add more servers. The difference in the scaling story is that rather than handling load by farming out HTTP requests amongst multiple web servers, the Elko approach is to farm out contexts amongst multiple Context Servers.

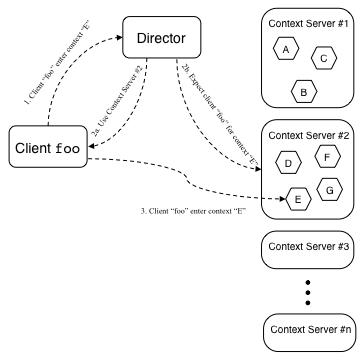

In Elko, a context can be said to be active or inactive. An inactive context is saved in persistent storage, such as a file or a database. An active context exists in the process and memory space of some Context Server. The job of the Director is to keep track of which contexts are active and, when active, which Context Server each one is running on. When a client wishes to enter a particular context (that is, initiate a communications connection to it), the client sends a request to a Director asking where to go (these requests are routed to Directors using the kinds of standard web scaling techniques described above). If the context is active, the Director replies to the client with the address of the Context Server upon which the context is running (and notifies the Context Server to expect the client’s arrival), rather like this:

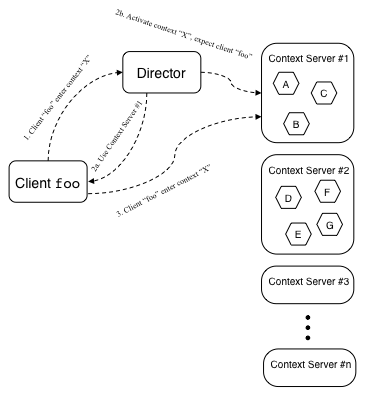

If the context is not active, the Director picks a Context Server to run the context, replies to the client with the address of this Context Server, and sends the chosen Context Server a message commanding it to activate the context, like this:

(Note that there is a race between the client arriving at the Context Server and the Context Server loading the context, but the implementation ensures that this is taken care of.)

Unlike the members of a cluster of traditional web servers, the address of each Context Server is fixed. Thus, once the client connection to a particular Context Server is made, the client communicates with the same Context Server for all of its interaction needs in that context for as long as the session lasts. This means the Context Server can keep the context state in memory, only going to persistent storage as needed for checkpointing long-term application state. Once the last client exits a context, that context can be unloaded and the server capacity made available for other contexts.

The Context Servers keep the Directors apprised of the contexts they are handling, the clients that are in those contexts, and the server load they are currently experiencing. From this information, the Directors can route client traffic by context or by user (e.g., in a chat application, I may want to enter the chat room where my friends are, rather than a specific room whose identity I know a priori), and can identify the least heavily loaded servers for new context activation.
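The routing decision described above can be sketched in a few lines of Python. This is only an illustration of the idea, not Elko’s actual code or API: the `Director` class, its method names, and the server address strings are all made up, and the messages a real Director would send to Context Servers are reduced to a boolean return value.

```python
class Director:
    """Toy model of the Director's in-memory routing tables."""

    def __init__(self):
        self.load = {}    # Context Server address -> last reported load
        self.active = {}  # context id -> address of the server running it

    def report_load(self, server, load):
        """Called when a Context Server reports its current load."""
        self.load[server] = load

    def context_closed(self, context_id):
        """Called when the last client leaves and the context is unloaded."""
        self.active.pop(context_id, None)

    def route(self, context_id):
        """Return (server address, activated) for a client entering a context.

        `activated` is True when the context was inactive and the chosen
        server would need to be told to load it.
        """
        server = self.active.get(context_id)
        if server is not None:
            return server, False
        if not self.load:
            raise RuntimeError("no Context Servers have registered")
        # Pick the least heavily loaded server for the new activation.
        server = min(self.load, key=self.load.get)
        self.active[context_id] = server
        return server, True
```

Note that every lookup here touches only dictionaries in memory, which is the property that lets one Director serve a large number of clients.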

Directors can be replicated for scale and redundancy, but since they actually do very little work, one Director can handle the load for a large number of clients before capacity becomes an issue. Director scalability is also enhanced because servicing clients only makes reference to in-memory data structures, so everything the Director does is very fast and has quick turnaround.

This scheme scales very well. Because it has a very light footprint and services nearly everything from memory, even a single Context Server can manage a substantial load. We benchmarked the SAF Context Server, which had the identical architecture, in 2002 at Sun’s performance testing center in Menlo Park. On a Sun Enterprise 450 server (2-processor 400 MHz SPARC, a mid- to low-range machine even then), we ran a simulated chat environment, running 8000 concurrent connections spread over ~200 chat rooms, with an average fanout per room of ~40 users, with each client producing an utterance approximately every 30 seconds (in a 40 user chat room, that level of activity is positively frantic). This resulted in about 20% CPU load with no user-detectable lag. Ironically, the biggest challenge in performing this test was generating enough load. We ended up having to use several of the biggest machines they had in the lab to run the client side of the test. Note also that this test was conducted three or four generations of server hardware ago. I expect that on modern machines, these numbers will be even more substantial.
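For a sense of what those benchmark figures imply in message traffic, here is a back-of-the-envelope calculation in Python. It assumes that every utterance is relayed to all of the roughly 40 users in its room (sender included); that relay model is an assumption made for this estimate, not a measured detail of the test.

```python
def chat_load(connections, rooms, seconds_per_utterance):
    """Estimate per-second message rates for the simulated chat workload."""
    users_per_room = connections / rooms
    inbound_per_second = connections / seconds_per_utterance
    # Assumption: each utterance fans out to every user in the room.
    outbound_per_second = inbound_per_second * users_per_room
    return users_per_room, inbound_per_second, outbound_per_second


fanout, inbound, outbound = chat_load(8000, 200, 30)
print(f"{fanout:.0f} users/room, {inbound:.0f} msgs/s in, {outbound:.0f} msgs/s out")
# 40 users/room, 267 msgs/s in, 10667 msgs/s out
```

Under that assumption the server was delivering on the order of ten thousand messages per second at about 20% CPU.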

One potential criticism of this scaling strategy is that it is more complicated than the way web servers usually do things. On the surface, I have to concede that that is true. However, by the time you take into consideration the extra work you need to do in an actual large-scale web setup, configuring routers and load balancers and memcache servers and database clusters and endless other complications, plus all the extra application engineering work to make use of these, I think Elko ends up being a simpler configuration. I know from experience that it’s a vastly simpler environment for the application coder.

So that’s the theoretical side of the scaling story. I invite anyone who has an interest in delving deeper to check things out for themselves. The code is here.

September 6, 2009

Elko II: Against Statelessness (or, Everything Old Is New Again)

Preface: This is the second of three posts on Elko, a server platform for sessionful, stateful web applications that I’m releasing this week as open source software. Part I, posted yesterday, presented the business backstory for Elko. This post presents the technical backstory: it lays out the key ideas that led to the thing. Part III, which will be posted tomorrow, presents a more detailed technical explication of the system itself.

It seems to be an article of faith in the web hosting and web server development communities that one of the most expensive resources that gets used up on a web server is open TCP connections. Consequently, a modern web server goes to great lengths to try to close any open TCP connection it can as soon as possible. Symptoms of this syndrome include short timeouts on HTTP Keep-Alive sessions (typically on the order of 10 seconds) and connection pool size limits on reverse proxies, gateways, and the like (indeed, a number of strange limits of various kinds seem to appear nearly any time you see the word “pool” used in any server related jargon). These guys really, really, really want to close that connection.

In the world as I see it, the most expensive thing is not an open connection per se. The cost of an open but inactive TCP connection is trivial: state data structures measured in the tens or hundreds of bytes, and buffer space measured in perhaps tens of kilobytes. Keeping hundreds of thousands of simultaneous inactive connections open on a single server (i.e., vastly more connections than the server would be able to service if they were all active) is really not that big a deal.

The expense I care about is the client latency associated with opening a new TCP connection. Over IP networks, just about the most expensive operation there is is opening a new TCP connection. In my more cynical moments, I imagine web guys thinking that since it is expensive, it must be valuable, so if we strive to do it as frequently as possible, we must be giving the users a lot of value, hence HTTP. However, the notable thing about this cost is that it is borne by the user, who pays it by sitting there waiting, whereas the cost of ongoing open connections is paid by the server owner.

So why do we have this IMHO upside down set of valuation memes driving the infrastructure of the net?

The answer, in part, lies in the architecture of a lot of server software, most notably Apache. Apache is not only the leading web server, it is arguably the template for many of its competitors and many of its symbionts. It is the 800 pound gorilla of web infrastructure.

Programming distributed systems is hard. Programming systems that do a lot of different things simultaneously is hard. Programming long-lived processes is hard. So a trick (and I should acknowledge up front that it’s a good trick) that Apache and its brethren use is the one-process-per-connection architecture (or, in some products, one-thread-per-connection). The idea is that you have a control process and a pool of worker processes. The control process designates one of the worker processes to listen for a new connection, while the others wait. When a new connection comes in, the worker process accepts the connection and notifies the control process, who hands off responsibility for listening to one of the other waiting processes from the pool (actually, often this handshake is handled by the OS itself rather than the control process per se, but the principle remains the same). The worker then goes about actually reading the HTTP request from the connection, processing it, sending the reply back to the client, and so on. When it’s done, it closes the connection and tells the control process to put it back into the pool of available worker processes, whence it gets recycled.

This is actually quite an elegant scheme. It kills several birds with one stone: the worker process doesn’t have to worry about coordinating with anything other than its sole client and the control process. The worker process can operate synchronously, which makes it much easier to program and to reason about (and thus to debug). If something goes horribly wrong and a particular HTTP request leads to something toxic, the worker process can crash without taking the rest of the world with it; the control process can easily spawn a new worker to replace it. And it need not even crash — it can simply exit prophylactically after processing a certain number of HTTP requests, thus mitigating problems due to slow storage leaks and cumulative data inconsistencies of various kinds. All this works because HTTP is a stateless RPC protocol: each HTTP request is a universe unto itself.

Given this model, it’s easy to see where the connections-are-expensive meme comes from: a TCP connection may be cheap, but a process certainly isn’t. If every live connection needs its own process to go with it, then a bunch of connections will eat up the server pretty quickly.

And, in the case of HTTP, the doctrine of statelessness is the key to scaling a web server farm. In such a world, it is frequently the case that successive HTTP requests have a high probability of being delivered to different servers anyway, and so the reasoning goes that although some TCP connects might be technically redundant, this will not make very much difference in the overall user experience. And some of the most obvious inefficiencies associated with loading a web page this way are addressed by persistent HTTP: when the browser knows in advance that it’s going to be fetching a bunch of resources all at once from a single host (such as all the images on a page), it can run all these requests through a single TCP session. This is a classic example of where optimization of a very common special case really pays off.

The problem with all this is that the user’s mental model of their relationship with a web site is often not stateless at all, and many web sites do a great deal of work in their presentation to encourage users to maintain a stateful view of things. So-called “Web 2.0” applications only enhance this effect, first because they blur the distinction between a page load and an interaction with the web site, and second because their more responsive Ajax user interfaces make the interaction between the user and the site much more conversational, where each side has to actively participate to hold up their end of the dialog.

In order for a web server to act as a participant in a conversation, it needs to have some short-term memory to keep track of what it was just talking to the user about. So after having built up this enormous infrastructure predicated on a stateless world, we then have to go to great effort and inconvenience to put the state back in again.

Traditionally, web applications keep the state in one of four places: in a database on the backend, in browser cookies, in hidden form fields on the page, and in URLs. Each of these solutions has distinct limitations.

Cookies, hidden form fields, and URLs suffer from very limited storage capacity and from being in the hands of the user. Encryption can mitigate the latter problem but not eliminate it — you can ensure that the bits aren’t tampered with but you can’t ensure that they won’t be gratuitously lost. These three techniques all require a significant amount of defensive programming if they are to work safely and reliably in any but the most trivial applications.
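As an illustration of the kind of defensive programming involved, here is a minimal sketch of tamper-evident state in a cookie. Strictly speaking it uses an HMAC signature rather than encryption (the user can still read the contents), and the key, the cookie layout, and the function names `encode_state` and `decode_state` are all assumptions made up for this example.

```python
import base64
import hashlib
import hmac
import json

# Hypothetical key; it stays on the server and is never sent to the browser.
SECRET_KEY = b"server-side secret"

def encode_state(state):
    """Serialize the state and append a signature over the serialized bytes."""
    payload = base64.urlsafe_b64encode(json.dumps(state, sort_keys=True).encode())
    tag = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest().encode()
    return payload + b"." + tag

def decode_state(cookie):
    """Return the state dict, or None if the cookie is missing or was altered."""
    if not cookie or b"." not in cookie:
        return None                      # lost cookie: nothing we can do but start over
    payload, tag = cookie.rsplit(b".", 1)
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest().encode()
    if not hmac.compare_digest(tag, expected):
        return None                      # tampered cookie: refuse to trust it
    return json.loads(base64.urlsafe_b64decode(payload))
```

The signature catches tampering, but notice that every caller still has to cope with `None`: nothing here can stop the browser from simply losing the cookie.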

Databases can avoid the security, capacity and reliability problems with the other three methods, but at the cost of reintroducing one of the key problems that motivated statelessness in the first place: the need for a single point of contact for the data. Since the universe is born anew with each HTTP request, the web server that receives the request must query the database each time to reconstruct its model of the session, only to discard it again a moment later when request processing is finished. In essence, the web server is using its connection to the database (often a network connection to another server external to itself) as its memory bus. The breathtaking overhead of this has led to a vast repertoire of engineering tricks and a huge after-market for support products to optimize things, in the form of a bewildering profusion of caches, query accelerators, special low-latency networking technologies, database clusters, high-performance storage solutions, and a host of other specialty products that frequently are just band-aids for the fundamental inefficiencies of the architecture that is being patched. In particular, I’ve been struck by the cargo-cult-like regard that some developers seem to have for the products of companies like Oracle and Network Appliance, apparently believing these products to possess some magic scaling juju that somehow makes them immune to the fundamental underlying problems, rather than merely being intensely market-driven focal points for the relentless incremental optimization of special cases.

(Before people start jumping in here and angrily pointing out all the wonderful things that databases can do, please note that I’m not talking about the many ways that web sites use databases for the kinds of things databases are properly used for: query and long term storage of complexly structured large data sets. I’m talking about the use of a database to hold the session state of a relatively short-term user interaction.)
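The database-as-memory-bus pattern looks roughly like the sketch below. It is a toy under obvious assumptions: an in-memory SQLite database stands in for a database server on another machine, and the `sessions` table and `handle_request` function are invented for illustration.

```python
import json
import sqlite3

# Stand-in for a database that would normally live on another server.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE sessions (id TEXT PRIMARY KEY, state TEXT NOT NULL)")

def handle_request(session_id, item):
    """Stateless handler: rebuild the session, change it, store it, forget it."""
    # Round trip 1: reconstruct the session from the database.
    row = db.execute(
        "SELECT state FROM sessions WHERE id = ?", (session_id,)
    ).fetchone()
    state = json.loads(row[0]) if row else {"cart": []}

    state["cart"].append(item)           # the actual work of the request

    # Round trip 2: write the whole session back.
    db.execute(
        "INSERT OR REPLACE INTO sessions (id, state) VALUES (?, ?)",
        (session_id, json.dumps(state)),
    )
    db.commit()
    return len(state["cart"])            # 'state' is discarded when we return
```

One line of real work is wrapped in two trips to the database, and the same thing happens again on the very next request from the same user.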

And all of these approaches still impose some strong limitations on the range of applications that are practical. In particular, applications that involve concurrent interaction among multiple users (a very simple example is multi-user chat) are quite awkward in a web framework, as are applications that involve autonomous processes running inside the backend (a very simple example of this might be an alarm clock). These things are by no means impossible, but they definitely require you to cut against the grain.

Since the range of things that the web does do well is still mind-bogglingly huge, these limitations have not been widely seen as pain points. There are a few major applications that fundamentally just don’t work well in the web paradigm and have simply ignored it, most notably massively multiplayer online games like World of Warcraft, but these are exceptions for the most part. However, there is some selection bias at work here: because the web encourages one form of application and not another, the web is dominated by the form that it favors. This is not really a surprise. What does bother me is that the limitations of the web have been so internalized by the current generation of developers that I’m not sure they are even aware of them, and thus applications that step outside the standard model are never even conceived of in the first place.

Just consider how long it has taken Ajax to get traction: “Web 2.0” was possible in the late 1990s, but few people then realized the potential that was latent in JavaScript-enabled web browsers, and fewer still took the potential seriously (notably, among those who did is my longtime collaborator and business associate, Doug Crockford, instigator of the JSON standard and now widely recognized, albeit somewhat retroactively, as a Primo Ajax Guru). That “Web 2.0” happened seven or eight years later than it might otherwise have is due almost entirely to widespread failure of imagination. Doug and I were founders of a company, State Software, that invented a form of Ajax in all but name in 2001, and then crashed and burned in 2002 due, in large part, to a complete inability to get anybody interested (once again, You Can’t Tell People Anything).

Back in The Olden Days (i.e., to me, seems like yesterday, and, to many of my coworkers, before the dawn of time), the canonical networked server application was a single-threaded Unix program driven by an event loop sitting on top of a call to select(), listening for new connections on a server socket and listening for data I/O traffic on all the other open sockets. And that’s pretty much how it’s still done, even in the Apache architecture I described earlier, except that the population of developers has grown astronomically in the meantime, and most of those newer developers are working inside web frameworks that hide this from you. It’s not that developers are less sophisticated today (though many of them are, and that’s a Good Thing because it means you can do more with less), but it means that the fraction of developers who understand what they’re building on top of has gone way down. I hesitate to put percentages on it, lacking actual quantitative data, but my suspicion is that it’s gone from something like “most of them” to something like “very, very few of them”.
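For readers who have never seen one, the old-style loop looks something like the following sketch. It is written in Python rather than C for brevity, the `EchoServer` class and its `step` method are names invented for this example, and the "application" (upper-casing whatever arrives) is just a placeholder.

```python
import select
import socket

class EchoServer:
    """Single-threaded server: one select() call watches every socket."""

    def __init__(self):
        self.listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
        self.listener.bind(("127.0.0.1", 0))   # port 0: let the OS choose a free port
        self.listener.listen()
        self.clients = []                      # every open connection lives here

    @property
    def port(self):
        return self.listener.getsockname()[1]

    def step(self, timeout=1.0):
        """Run one pass of the event loop; return how many sockets were ready."""
        watched = [self.listener] + self.clients
        readable, _, _ = select.select(watched, [], [], timeout)
        for sock in readable:
            if sock is self.listener:
                conn, _addr = self.listener.accept()   # a new connection arrived
                self.clients.append(conn)
            else:
                data = sock.recv(4096)
                if data:
                    sock.sendall(data.upper())         # placeholder application logic
                else:                                  # empty read: the peer hung up
                    self.clients.remove(sock)
                    sock.close()
        return len(readable)

    def serve_forever(self):
        while True:
            self.step()
```

A real server would call `serve_forever()`; `step()` is split out only so that a single pass of the loop can be driven by hand. The thing to notice is that one process holds all the connections at once, so an open connection costs a socket and a list entry rather than a whole process.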

But it’s worth asking what would happen if you implemented the backend for a web application like an old-fashioned stateful server process, i.e., keep the client interacting over the same TCP connection for the duration of the session, and just go ahead and keep the short-term state of the session in memory. Well, from the application developer’s perspective, that would be just terribly, terribly convenient. And that’s the idea behind Elko, the server and application framework this series of posts is concerned with. (Which, as mentioned in Part I, I’m now unleashing on the world as open source software that you can get here).
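To show just how convenient, here is a toy sketch of a session whose state is nothing more than a local variable that lives as long as the connection does. This is emphatically not Elko's API: the function names are invented, a thread and a `socketpair()` stand in for a real server and a real network connection, and the one-line-per-message protocol is an assumption made for the example.

```python
import socket
import threading

def serve_session(conn):
    """One long-lived connection; the session state is just a local variable."""
    cart = []                                   # lives exactly as long as the connection
    with conn, conn.makefile("rb") as lines:
        for line in lines:                      # one message per line, until the client hangs up
            cart.append(line.decode().strip())
            reply = f"{len(cart)}: {', '.join(cart)}\n"
            conn.sendall(reply.encode())

def open_session():
    """Start a session handler and return the client's end of the connection."""
    client, server_end = socket.socketpair()
    threading.Thread(target=serve_session, args=(server_end,), daemon=True).start()
    return client

def send(client, text):
    """Send one message and wait for the one-line reply."""
    client.sendall(text.encode() + b"\n")
    reply = b""
    while not reply.endswith(b"\n"):
        chunk = client.recv(1024)
        if not chunk:                           # server closed the connection
            break
        reply += chunk
    return reply.decode().strip()
```

There is no session ID, no lookup, and no serialization: the second message finds the cart exactly where the first one left it.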

Now the only problem with the aforementioned approach, really, is that it blows the whole standard web scaling story completely to hell — that and the fact that the browser and the rest of the web infrastructure will try to thwart you at every turn as they attempt to optimize that which you are not doing. But let’s say you could overcome those issues, let’s say you had tricks to overcome the browser’s quirks, and had an awesome scaling story that worked in this paradigm. Obviously I wouldn’t have been going on at length about this if I didn’t have a punchline in mind, right? That will be the substance of Part III tomorrow.