September 7, 2009

Elko III: Scale Differently

Preface: This is the third of three posts on Elko, a server platform for sessionful, stateful web applications that I’m releasing this week as open source software. Earlier, Part I presented the business backstory for Elko. Part II, yesterday’s post, presented the technical backstory, laying out the key ideas that led to the thing. Today’s post presents a more detailed technical explication of the system itself, with particular emphasis on the scaling model that enables it all to work effectively.

In Part II I ranted at length about some of the unfortunate consequences of the doctrine of statelessness, the predominant paradigm for scaling web applications. Keeping the short-term state of a client-server session in the server’s memory is easy and therefore tempting, but, the story goes, you shouldn’t do that because it means you can’t scale your application: you just can’t handle the traffic from thousands or millions of users on the single machine whose memory it would be.

But this isn’t so much a server capacity problem as it is a traffic routing problem. In a traditional web server farm, load is distributed across multiple servers by arranging for successive HTTP requests to a particular named host to be delivered to different servers. Typically this is accomplished through provision of multiple IP addresses in the DNS resolution of the host name or through special load balancing routers in the server datacenter that virtualize the nominal host IP address, directing successive TCP sessions to different machines on the datacenter’s internal network.

This technique has a number of virtues, not least of which is that it is relatively simple. It takes advantage of the expectation that the loads that successive HTTP requests are going to place on the servers are likely to be uncorrelated, and thus delivering requests to servers on a simple round-robin schedule, or even randomly, will, through the statistical magic of large numbers, result in more or less even load distribution across the datacenter. This lack of correlation is usually a reasonable assumption, since the various browsers hitting a given site around the same time are, for most sites, uncoordinated (indeed, the deliberate coordination of such activity is the basis for a major class of denial of service attacks).

However, just as this scheme implies that a given browser has no control over (nor ability to predict) which server machine it’s actually going to be talking to when it sends an HTTP request, it similarly means that a given server has no say over which clients it will be servicing. Any service implementation that relies on local data coherence from one request to the next (other than of a statistical nature, as is exploited by caching) is thus doomed. Keeping session state in the server’s memory is right out.
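To make that failure mode concrete, here is a small Python sketch. It is not Elko code: the `NaiveServer` class, the server names, and the use of `itertools.cycle` as a stand-in for rotating DNS or a load balancer are all invented for illustration.

```python
from itertools import cycle


class NaiveServer:
    """A web server that (unwisely) keeps session state in its own memory."""

    def __init__(self, name):
        self.name = name
        self.sessions = {}  # client id -> number of requests THIS server has seen

    def handle(self, client_id):
        # Each server only knows about the requests that happened to reach it.
        self.sessions[client_id] = self.sessions.get(client_id, 0) + 1
        return self.sessions[client_id]


servers = [NaiveServer(f"web{i}") for i in range(3)]
round_robin = cycle(servers)  # stand-in for rotating DNS or a load balancer

# One client sends six requests; the balancer, not the client, picks the server.
counts = [next(round_robin).handle("alice") for _ in range(6)]
print(counts)  # prints [1, 1, 1, 2, 2, 2]
```

A server that really owned the session would have counted 1 through 6. Here each machine holds only a fragment of the client's history, which is why the stateless doctrine pushes that state out to a database or cache instead.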

Elko approaches the scaling problem in a different way. First of all, we embrace the concept of a session: a series of interactions between the client and the server that has a beginning, a middle, and an end. This is by no means an exotic abstraction; indeed, the TCP protocol that HTTP is layered on top of is sessionful in exactly this way. However, HTTP then takes the session abstraction away from us, leaving it to the web application framework (of which, in this sense, Elko is just one of many) to pile on a bunch of additional mechanism to put it back in again.

Whereas, from the client’s perspective, a TCP session represents a communications connection to a particular host on the network, an Elko session represents a communications connection to a particular context. Like a web page, a context has a distinct, addressable identity. Unlike a web page, a context has its own computational existence independent of who is communicating with it at any given moment. In particular, multiple clients can interact with a given context at the same time, and the context itself can act independently of any of its individual clients, including when there are no clients at all. For example, in a multi-user chat application, the contexts would most likely be chat rooms. In a real-time auction application, contexts might represent the various auctions that are going on.
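As a rough illustration of the idea, a chat-room context might be modeled like this in Python. This is a toy model rather than Elko's actual classes: the `Context` class, its method names, and the per-client outbox lists are assumptions made for the sketch.

```python
class Context:
    """An addressable unit of shared state, for example one chat room."""

    def __init__(self, ref):
        self.ref = ref      # the context's address, e.g. "room-lobby"
        self.clients = {}   # client name -> that client's outbound message queue
        self.history = []   # state owned by the context, not by any one client

    def enter(self, name):
        self.clients[name] = []
        return self.clients[name]

    def exit(self, name):
        self.clients.pop(name, None)

    def say(self, speaker, text):
        # One client's message changes shared state and fans out to everyone present.
        self.history.append((speaker, text))
        for outbox in self.clients.values():
            outbox.append(f"{speaker}: {text}")

    def tick(self, now):
        # The context can also act on its own, even with no clients connected.
        self.history.append(("system", f"tick at {now}"))


room = Context("room-lobby")
alice = room.enter("alice")
bob = room.enter("bob")
room.say("alice", "hello")
room.exit("alice")
room.exit("bob")
room.tick(42)  # still works with the room empty
```

The point of the sketch is that `history` belongs to the room: it outlives any individual client's connection, and both clients see the same message because they are talking to the same object.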

The Elko platform provides several different types of servers, all based on a common set of building blocks. However, for purposes of the present discussion, there are two that matter: the Context Server and the Director.

A Context Server provides an environment in which contexts run. Context Servers are generic and fungible in the same kinds of ways that web servers are: need more capacity? Just add more servers. The difference in the scaling story is that rather than handling load by farming out HTTP requests amongst multiple web servers, the Elko approach is to farm out contexts amongst multiple Context Servers.

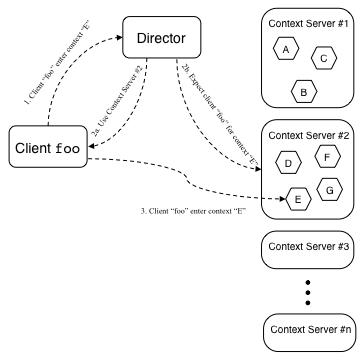

In Elko, a context can be said to be active or inactive. An inactive context is saved in persistent storage, such as a file or a database. An active context exists in the process and memory space of some Context Server. The job of the Director is to keep track of which contexts are active and, when active, which Context Server each one is running on. When a client wishes to enter a particular context (that is, initiate a communications connection to it), the client sends a request to a Director asking where to go (these requests are routed to Directors using the kinds of standard web scaling techniques described above). If the context is active, the Director replies to the client with the address of the Context Server upon which the context is running (and notifies the Context Server to expect the client’s arrival), rather like this:

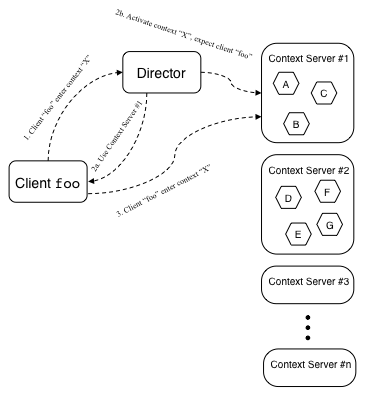

If the context is not active, the Director picks a Context Server to run the context, replies to the client with the address of this Context Server, and sends the chosen Context Server a message commanding it to activate the context, like this:

(Note that there is a race between the client arriving at the Context Server and the Context Server loading the context, but the implementation ensures that this is taken care of.)
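The two cases above can be sketched in a few lines of Python. This is only an outline under stated assumptions, not the Director's real protocol: the `Director` class, the `reserve` method, the message tuples, and the choice of "fewest active contexts" as the placement rule are all hypothetical.

```python
class Director:
    """Tracks which contexts are active and where (illustrative sketch only)."""

    def __init__(self):
        self.active = {}   # context ref -> address of the Context Server running it
        self.servers = {}  # server address -> number of active contexts (crude load)
        self.sent = []     # messages "sent" to Context Servers, recorded for inspection

    def register_server(self, address):
        self.servers.setdefault(address, 0)

    def reserve(self, context_ref, client):
        """Answer a client's 'where do I go?' request for a context."""
        address = self.active.get(context_ref)
        if address is not None:
            # Active context: tell its server to expect the client.
            self.sent.append((address, "expect", context_ref, client))
            return address
        if not self.servers:
            raise RuntimeError("no Context Servers available")
        # Inactive context: pick a server (assumed rule: fewest contexts) and
        # command it to activate the context.
        address = min(self.servers, key=self.servers.get)
        self.active[context_ref] = address
        self.servers[address] += 1
        self.sent.append((address, "activate", context_ref, client))
        return address


director = Director()
director.register_server("cs1:9000")
director.register_server("cs2:9000")
first = director.reserve("room-1", "alice")   # inactive, so it gets activated
second = director.reserve("room-1", "bob")    # already active, same server
third = director.reserve("room-2", "carol")   # lands on the idle server
```

The sketch ignores the race mentioned above: in a real deployment the client may reach the Context Server before the activation message has been processed, and the server has to cope with that ordering.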

Unlike the members of a cluster of traditional web servers, the address of each Context Server is fixed. Thus, once the client connection to a particular Context Server is made, the client communicates with the same Context Server for all of its interaction needs in that context for as long as the session lasts. This means the Context Server can keep the context state in memory, only going to persistent storage as needed for checkpointing long-term application state. Once the last client exits a context, that context can be unloaded and the server capacity made available for other contexts.
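A minimal Python sketch of that lifecycle follows. It is an illustration, not the Context Server's implementation: the `ContextServer` class, a plain dict standing in for persistent storage, JSON as the storage format, and checkpointing only on unload are all simplifying assumptions.

```python
import json


class ContextServer:
    """Keeps active contexts in memory; touches storage only to load or checkpoint."""

    def __init__(self, store):
        self.store = store  # dict standing in for a file or database: ref -> JSON text
        self.loaded = {}    # ref -> {"state": dict, "clients": set}

    def activate(self, ref):
        if ref not in self.loaded:
            saved = self.store.get(ref)
            state = json.loads(saved) if saved is not None else {}
            self.loaded[ref] = {"state": state, "clients": set()}
        return self.loaded[ref]

    def enter(self, ref, client):
        self.activate(ref)["clients"].add(client)

    def checkpoint(self, ref):
        self.store[ref] = json.dumps(self.loaded[ref]["state"])

    def exit(self, ref, client):
        entry = self.loaded[ref]
        entry["clients"].discard(client)
        if not entry["clients"]:
            # Last client gone: save long-term state and free the memory.
            self.checkpoint(ref)
            del self.loaded[ref]


store = {}
server = ContextServer(store)
server.enter("room-1", "alice")
server.loaded["room-1"]["state"]["topic"] = "elko"  # in-memory update, no I/O
server.exit("room-1", "alice")                      # unloads and checkpoints
server.enter("room-1", "bob")                       # reloads the saved state
```

Between `enter` and `exit`, every read and write of the room's state is a plain memory access, which is where the performance claim later in this post comes from.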

The Context Servers keep the Directors apprised of the contexts they are handling, the clients that are in those contexts, and the server load they are currently experiencing. From this information, the Directors can route client traffic by context or by user (e.g., in a chat application, I may want to enter the chat room where my friends are, rather than a specific room whose identity I know a priori), and can identify the least heavily loaded servers for new context activation.
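Here is one way the bookkeeping behind those two queries could look, sketched in Python. The `LoadTable` class, the shape of the status report, and the single floating-point load factor are assumptions for illustration; the actual report format is not described in this post.

```python
class LoadTable:
    """What a Director might remember from Context Server status reports."""

    def __init__(self):
        # server address -> (load factor, {context ref: set of user names})
        self.reports = {}

    def report(self, server, load, contexts):
        # Each report replaces the previous picture of that server.
        self.reports[server] = (load, {ref: set(users) for ref, users in contexts.items()})

    def find_user(self, user):
        """Route by user: which context is this user in, and on which server?"""
        for server, (_, contexts) in self.reports.items():
            for ref, users in contexts.items():
                if user in users:
                    return ref, server
        return None

    def least_loaded(self):
        """Pick the server to host a newly activated context."""
        return min(self.reports, key=lambda s: self.reports[s][0])


table = LoadTable()
table.report("cs1:9000", 0.7, {"room-1": ["alice", "bob"]})
table.report("cs2:9000", 0.2, {"room-2": ["carol"]})
friend = table.find_user("bob")    # where should I go to join bob?
target = table.least_loaded()      # where should a new context be activated?
```

Because the table is rebuilt entirely from reports, nothing in it needs to be persisted: a Director that restarts simply waits for the Context Servers to report in again.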

Directors can be replicated for scale and redundancy, but since they actually do very little work, one Director can handle the load for a large number of clients before capacity becomes an issue. Director scalability is also enhanced because servicing clients only makes reference to in-memory data structures, so everything the Director does is very fast and has quick turnaround.

This scheme scales very well. Because it has a very light footprint and services nearly everything from memory, even a single Context Server can manage a substantial load. We benchmarked the SAF Context Server, which had the identical architecture, in 2002 at Sun’s performance testing center in Menlo Park. On a Sun Enterprise 450 server (2-processor 400 MHz SPARC, a mid- to low-range machine even then), we ran a simulated chat environment, running 8000 concurrent connections spread over ~200 chat rooms, with an average fanout per room of ~40 users, with each client producing an utterance approximately every 30 seconds (in a 40 user chat room, that level of activity is positively frantic). This resulted in about 20% CPU load with no user-detectable lag. Ironically, the biggest challenge in performing this test was generating enough load. We ended up having to use several of the biggest machines they had in the lab to run the client side of the test. Note also that this test was conducted three or four generations of server hardware ago. I expect that on modern machines, these numbers will be even more substantial.
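For a sense of what those figures mean in messages per second, here is the back-of-the-envelope arithmetic in Python. The inputs come from the benchmark description above; the assumption that every utterance is delivered to all ~40 occupants of a room (speaker included) is mine, and the results are estimates, not measured values.

```python
connections = 8000          # concurrent clients in the test
rooms = 200                 # approximate number of chat rooms
seconds_per_utterance = 30  # each client speaks roughly this often

users_per_room = connections / rooms                     # about 40, matching the stated fanout
inbound_per_sec = connections / seconds_per_utterance    # utterances arriving at the server
per_room_per_sec = users_per_room / seconds_per_utterance
outbound_per_sec = inbound_per_sec * users_per_room      # deliveries, assuming full fanout

print(f"{inbound_per_sec:.0f} utterances/s in, {outbound_per_sec:.0f} deliveries/s out")
print(f"{per_room_per_sec:.2f} utterances/s in each room")
```

So the server was taking in a few hundred messages per second and pushing out on the order of ten thousand, with a new line appearing in each room more than once a second.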

One potential criticism of this scaling strategy is that it is more complicated than the way web servers usually do things. On the surface, I have to concede that that is true. However, by the time you take into consideration the extra work you need to do in an actual large-scale web setup, configuring routers and load balancers and memcache servers and database clusters and endless other complications, plus all the extra application engineering work to make use of these, I think Elko ends up being a simpler configuration. I know from experience that it’s a vastly simpler environment for the application coder.

So that’s the theoretical side of the scaling story. I invite anyone who has an interest in delving deeper to check things out for themselves. The code is here.

10 Comments

Trackbacks/Pingbacks

- […] client to a given server that maintains state in memory. In addition to Chip Morningstar’s post on Elko, see JSON Messaging Over HTTP that explains what happens at the HTTP level. The client […]

Chip,

in addition to scalability, the other argument often used for “traditional” web architectures is built-in redundancy and fault-tolerance. In the Elko architecture, how do you make the Director fault-tolerant?

Posted by: Chris Lauwers | September 10, 2009, 2:04 pm

Chris, I guess I implied the answer but I should have been clearer about that. You make the Director fault tolerant through simple redundancy — just set up multiple Directors. At State Software, our standard configuration was 2 Directors, and this was for fault tolerance rather than scaling. You can have as many Directors as you like, though more than 2 or 3 is probably overkill. Client traffic to the multiple Directors is then routed using rotating DNS or a VIP, just as you would with a regular web server cluster.

Posted by: Chip Morningstar | September 11, 2009, 9:19 am

Yes, I should have been more specific: my question has to do with replicating state between multiple Directors. Do you use a shared database for this?

Posted by: Chris Lauwers | September 11, 2009, 10:33 am

All the Directors’ data structures are non-persistent. When Context Servers come up they notify the Directors of their availability to provide services. They then keep the Directors informed of their ongoing availability and load status. In addition, there’s a protocol whereby the Directors keep each other updated about their own statuses, so that they can also go up and down, and so that new Directors can be added to the cluster dynamically.

There’s also a more complex configuration, not described in my post but covered in some of the docs in the release package (though I will confess that here things could benefit from more documentation love), where there’s an additional kind of server called a Broker that keeps track of a heterogeneous collection of Elko servers and the various services they offer to other servers in the farm. The Broker acts as a kind of configuration manager, so that each server only needs to know one point of contact to get wired up with everybody else.

Posted by: Chip | September 11, 2009, 2:48 pm

Maybe I am missing something completely (which is most likely), but what is the difference between this and a traditional web architecture with sticky sessions turned on? When you say “client”, is that the browser client? Or both browser and server, which are clients to this context framework?

PS: The build up was great in Part II, but this part was a total let down (at least for thick heads like me)

Posted by: Victor Doss | September 3, 2010, 12:35 pm

Victor, that’s a very reasonable question. There is some similarity, and to the extent that one’s application entails a single client’s interaction with its own private session state, they are basically the same thing. However, there are a couple of points of differentiation that matter to a broader range of applications. The most important is that multiple clients can be readily routed to a common session context containing shared state that is relevant to all of them. The canonical example is a real-time, multiplayer game, but you might also consider auctions, shared graphical workspaces, or live online slide presentations: anything where lively shared data that spans multiple client sessions would be of value. Another notable differentiator, less profound but still useful pragmatically, is the shift in perspective that arises when you regard the client-server connection as a channel to a process rather than to a purely reactive object. That’s a little abstract, so let me dig into it a bit more: a conventional web server is designed to act by responding to each HTTP request as it is delivered. Elko does that also, but since it decouples the HTTP request/response handshake from the application message protocol, it can also initiate communication to the client asynchronously. Timers and other processes can run autonomously on the server and then send messages to the client when they feel the need to do so. For example, an auction application might close bidding after the passage of sufficient time with no new bids submitted, and then notify everyone participating in the auction that this has happened. All of that can be (and has been) done with a conventional web server also, but it cuts against the grain and requires jumping through a few hoops, whereas Elko does this natively.
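The auction example might be sketched along these lines in Python. This is a toy model and not Elko's API: the `Auction` class, the message dictionaries, and the explicit `now` argument (used in place of a real timer so the example is deterministic) are all invented for illustration.

```python
class Auction:
    """A shared context that closes itself after a quiet period."""

    def __init__(self, quiet_period):
        self.quiet_period = quiet_period  # seconds without bids before closing
        self.high_bid = None              # (bidder, amount) or None
        self.last_bid_at = 0.0
        self.open = True
        self.outboxes = {}                # client -> messages pushed by the server

    def join(self, client):
        self.outboxes[client] = []

    def _push(self, message):
        # Server-initiated send: every participant gets the message.
        for outbox in self.outboxes.values():
            outbox.append(message)

    def bid(self, client, amount, now):
        if not self.open:
            return False
        if self.high_bid is not None and amount <= self.high_bid[1]:
            return False
        self.high_bid = (client, amount)
        self.last_bid_at = now
        self._push({"op": "bid", "who": client, "amount": amount})
        return True

    def tick(self, now):
        """Driven by a server-side timer, not by any client request."""
        if self.open and now - self.last_bid_at >= self.quiet_period:
            self.open = False
            self._push({"op": "closed", "winner": self.high_bid})


auction = Auction(quiet_period=60)
auction.join("alice")
auction.join("bob")
auction.bid("alice", 10, now=0)
auction.bid("bob", 12, now=20)
auction.tick(now=50)   # only 30 quiet seconds so far, nothing happens
auction.tick(now=80)   # 60 quiet seconds, so the auction closes and notifies everyone
```

Note that the closing message reaches alice even though her last request was 80 seconds earlier. With sticky sessions alone there is no shared `Auction` object for both clients to be routed to, and no natural place for `tick` to run.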

Posted by: Chip | September 6, 2010, 9:40 pm

Thanks for the clarification. I am sold now. Actually I was sold when I read I have to use “make” to build it :-) Just wanted to make sure I am using it for the right application.

Posted by: Victor Doss | September 11, 2010, 10:11 am

How does this work in the context of an auto-scaling cluster of context servers, where servers can be brought online or taken off as load demands? There would need to be a mechanism for transferring live contexts from one server to another, e.g. when two lightly loaded servers are merged so that one can be taken offline, or when balancing load onto a newly-spawned server instance.

Posted by: Anson Mansfield | July 17, 2016, 4:30 pm

That’s a really interesting question. There are a couple of approaches, neither of which is in the current implementation but both of which have been on my things-that-might-be-worth-doing list for a while now.

The easy approach is a simple wait-for-quiescence strategy: In a lot of applications, contexts tend to be comparatively short-lived. In this case, a server can be taken down in two steps: first, it notifies the Director that it is no longer offering service, then it waits for its current batch of contexts to become inactive. Once they’re all gone, it can exit cleanly. Obviously, this is problematic if contexts don’t eventually go away, either because they really are long-lived or because somebody is just perversely hanging on for a long time. Some applications can tolerate loss of context state at the cost of some minor user inconvenience, and in such cases you can simply kick the recalcitrant users off (if they reconnect, they’ll reactivate the context on a different server and all will be well again, absent any loss of state that might have happened). Of course, if you can’t do that, you’ll have to go to the harder approach.
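In outline, the wait-for-quiescence shutdown could look like the following Python sketch. Since this isn't in the current implementation, everything here is hypothetical: the `DrainingServer` class, the `notify_director` callback, and the method names are invented to show the two steps.

```python
class DrainingServer:
    """A Context Server that can be retired by waiting for its contexts to empty."""

    def __init__(self, address, notify_director):
        self.address = address
        self.notify_director = notify_director  # callable(address, accepting)
        self.contexts = {}                       # context ref -> set of clients
        self.accepting = True

    def begin_shutdown(self):
        # Step 1: tell the Director to stop sending new contexts here.
        self.accepting = False
        self.notify_director(self.address, False)

    def client_exit(self, ref, client):
        clients = self.contexts[ref]
        clients.discard(client)
        if not clients:
            del self.contexts[ref]  # context became inactive on its own

    def kick_all(self):
        # Fallback for applications that can tolerate losing context state.
        self.contexts.clear()

    def can_exit(self):
        # Step 2: safe to exit only once draining has begun and nothing is left.
        return not self.accepting and not self.contexts


notices = []
server = DrainingServer("cs1:9000", lambda addr, ok: notices.append((addr, ok)))
server.contexts = {"room-1": {"alice"}, "room-2": {"bob"}}
server.begin_shutdown()
server.client_exit("room-1", "alice")
still_busy = not server.can_exit()   # bob is still hanging on in room-2
server.kick_all()
```

How long to wait before calling something like `kick_all` is an application decision; the sketch deliberately leaves out any timeout policy.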

The hard approach is context migration. This is hard because it requires a general object serialization scheme. However, it is quite feasible (we did something like this at Electric Communities, and it worked fine). It’s feasible because of the turn-based message execution model and because contexts generally have an isolated object graph. So you can stop the world between messages, serialize the context, ship it to another server, and redirect clients to the new server. The connection handoff logic is a bit hairy, and the serialization stuff is a lot of work, both of which are the reasons why this isn’t implemented yet (that, and because in practice we haven’t encountered use cases that demanded it, though that in turn may be due to the relatively light adoption of the Elko approach to things).
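A heavily simplified Python sketch of those steps follows. It is hypothetical throughout, since none of this exists in Elko today: the `Host` class, the `migrate` function, and the routing table are invented, and JSON over a plain dict stands in for the general object serialization that makes the real problem hard.

```python
import json
from collections import deque


class Host:
    """A server that processes one message per turn for the contexts it holds."""

    def __init__(self, address):
        self.address = address
        self.contexts = {}    # context ref -> state (a plain dict in this sketch)
        self.inbox = deque()  # pending (context ref, message) pairs

    def run_one_turn(self):
        ref, message = self.inbox.popleft()
        state = self.contexts[ref]
        state["count"] = state.get("count", 0) + message["add"]


def migrate(ref, source, target, client_routes):
    """Move one context between turns, when no message is mid-execution."""
    wire = json.dumps(source.contexts.pop(ref))  # serialize the isolated object graph
    target.contexts[ref] = json.loads(wire)      # ship it and revive it
    # Hand off messages that had not been processed yet.
    remaining = deque()
    for pending_ref, message in source.inbox:
        if pending_ref == ref:
            target.inbox.append((pending_ref, message))
        else:
            remaining.append((pending_ref, message))
    source.inbox = remaining
    # Redirect every client of this context to the new server.
    for client, (client_ref, _) in list(client_routes.items()):
        if client_ref == ref:
            client_routes[client] = (ref, target.address)


old, new = Host("cs1:9000"), Host("cs2:9000")
old.contexts["room-1"] = {"count": 0}
old.inbox.extend([("room-1", {"add": 1}), ("room-1", {"add": 2})])
routes = {"alice": ("room-1", "cs1:9000")}

old.run_one_turn()                       # first message runs on the old server
migrate("room-1", old, new, routes)      # world is stopped between turns
new.run_one_turn()                       # second message runs on the new server
```

The turn-based model is what makes the stopping point well defined: state is only ever observed between messages, so there is never a half-finished operation to capture. The sketch skips the connection handoff entirely, which is the part described above as hairy.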

Note that adding capacity to a cluster is comparatively easy — just spin up more context servers. Since we do true load balancing (in contrast to traffic balancing, which is what most web solutions are doing when they claim to be load balancing), we’re in a position to simply not put more load on a given machine than it can handle, so it’s less imperative to spread existing load when adding new servers. Instead, adding new servers simply increases the number of contexts that can be activated.

Posted by: Chip | July 17, 2016, 6:39 pm