Posts filed under "History"

April 12, 2026

Recovering a Lost Document: Habitat Anecdotes (1988)

While digging through old CVS archives recently, I turned up something I hadn’t seen in decades: my very first paper, written in the fall of 1988 while I was still in the thick of running the Lucasfilm Habitat Beta and Pilot tests.

As best I can tell, this document has been effectively lost to the web. It was hosted on communities.com at some point in the mid-to-late 1990s, but that site is long gone and neither the Wayback Machine nor any search engine turns up a surviving copy. The version below was recovered from a CVS repository archive, where it had been imported in May 1998 and apparently never touched since.

I’ve preserved it exactly as written, including the 1993 preface I added when Electric Communities was getting started. The dead links to communities.com throughout the document are artifacts of their time — that domain is gone, but the paper it once pointed to, The Lessons of Lucasfilm’s Habitat, remains available through other channels.

Reading it now, I’m struck by how much of what we were figuring out in 1987–88 still holds. The five user types, the economic emergent behavior, the tension between operator power and community trust — these weren’t abstractions. They were things happening in front of us, in real time, with real people paying real money. This paper is where I first tried to write them down.

Habitat Anecdotes

and other boastings by F. Randall Farmer

Fall 1988

Preface (Fall 1993)

This, my very first paper, documents my early observations of Lucasfilm’s Habitat Beta and Pilot tests in 1987 and 1988. These observations served as raw material for several published papers that became the inspiration for the formation of Electric Communities, a cyberspace research company founded by Chip Morningstar, Douglas Crockford and myself in 1993. If you don’t know anything about Habitat, this paper won’t make much sense. The unfamiliar should first read The Lessons of Lucasfilm’s Habitat in Cyberspace: First Steps from MIT Press.

This paper is adapted from a hypertext document.

The People

The entire point of Habitat is The People. It is an interactive environment where people define the parameters of their experience. Chip likes to call it “A Social Crucible”: throw some people in a room with some fun toys, and see what happens. If a situation arises that requires modification, first let them try to sort it out –avoid changing the rules– and if they can’t, take their input on how to change things. From this, it is clear that to understand Habitat, we must first understand its users.

There are basically 5 types of people in the Habitat universe:

1) The Passive

Easily 50% of the users fall into this category, but they probably use only 20% of the connect time (rough estimates). They tend to “cross over” to Habitat only to read their mail, collect their 100t bonus, and read the weekly newspaper. They tend to show up for events ad hoc, when the mood strikes. This is the most important area for development. Special events and activities need to target this “on for just a few minutes” group. This group must be led by the hand to participate. They tend to want to “be entertained” with no effort, like watching TV. The trick here is to encourage active participation.

2) The Active

This group is the next largest, and makes up the bulk of the paying user-hours. The active user participates in 2-5 hours of activities a week. They tend to log into Habitat right after connecting. They send out ESP messages to others on-line to find out what is going on. They ALWAYS have a copy of the latest paper (and gripe if it comes out late). This group’s biggest problem is overspending. They really like Habitat, and lose track of the time spent “out there”. The watchword here is “be thrifty”. (See Quests for more on this)

3) The Motivators

The real heroes of Habitat. The Motivators understand that Habitat is what they make of it. They set out to change it. They throw parties, start institutions, open businesses, run for office, start moral debates, become outlaws, and win contests. Motivators are worth their weight in gold. One motivator for every 50 Passive/Active users is wonderful. Nurture these people. (See Motivators & Caretakers at Work)

4) The Caretakers

Usually already employees. The Caretakers are “mature” Motivators. They tend to help the new users, control personal conflicts, record bugs, suggest improvements, run their own contests, officiate at functions, and in general keep things running smoothly. There are far fewer of these than Motivators. Out of a Pilot group of about 400, we had 3. What you want to do with a Caretaker is groom him for Geek Godhood. (See Motivators & Caretakers at work)

5) The Geek Gods (System Operators)

I was the first Oracle/Operator. (I talk about that experience in Geek Gods Revisited). The operator’s job is most important. It really is like being a Greek God from the ancient writings. The Oracle grants wishes and introduces new items/rules into the world. With one bold stroke of the keyboard, the operator can create/eliminate bank accounts, entire city blocks, or the family business. This is a difficult task as one must consider the repercussions of any “external” effects to the world. Think about this: Would you be mad at “God” if one day suddenly electricity didn’t work anymore? Habitat IS a world. As such, it should be run by someone who has experience in that area. I suggest at least 10 years’ experience in Fantasy Role Playing and 2 years on telecommunications networks (specifically ones with CHAT programs). A Geek God must understand both consistency in fictional worlds, and the people who inhabit them.

To optimize the Habitat funativity experience, the goal is to move the user from his/her present category to the next one up: Passive -> Active -> Motivator -> Caretaker -> Geek God.

Move everyone one role to the right, and you will have a successful, self-maintaining system. (Read: you will make bags of money.)

Real Money

The Habitat Beta Test was actually a paying pilot-test. The testers would be paying $0.08 per minute to play and in this way we could see if Habitat was financially feasible. There were exceptions; about 25% of the testers would be QLink staff, who either had free accounts or were given a certain number of free hours. This distinction caused some difficulty in deciding if any Habitat activity was a success (see The Scheduled Events). We wanted to see if Habitat was fun enough for paying customers.

Read these (don’t forget to read between the lines):

A certain user posted this message (edited for brevity):

As of today I am quitting Habitat. It costs too much. I have been a Q-Link subscriber for 2 years. The first year I used only 2 plus hours. ($10) The next year I used only 5. ($25) But in the last month, while I was playing Habitat I spent $270!!! I can’t afford that. You need to make it cheaper.

$270 = 57 hours or over 100 times his previous peak usage!

We must have made it “too much fun!”
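The arithmetic behind that figure can be checked with a few lines of Python. This is only a sketch: it assumes the $0.08 per minute rate quoted above applied to the whole bill, and the function name is made up for illustration. It lands a little under the rounded 57 hours given above.

```python
# Rate from the "Real Money" section: $0.08 per minute, kept in cents
# so the division stays exact.
RATE_CENTS_PER_MINUTE = 8

def hours_for_bill(dollars: int) -> float:
    """Connect hours that a bill of `dollars` buys at the Beta rate (hypothetical helper)."""
    minutes = dollars * 100 / RATE_CENTS_PER_MINUTE
    return minutes / 60

print(hours_for_bill(270))  # 56.25
```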

another user said:

I didn’t realize that I was going to want to play 50 hours/month!

Habitat (for some) was addictive. Because of this, there was a call for “Bulk Discounts” and various schemes were proposed by the users. None of them were implementable, and all of them would have resulted in significant losses. I fully expect the call to go out again when it is released.

Yet another spent over $1000 in one month in Habitat. At around $300 and $600, he was mailed a message suggesting he “check out his usage in the billing section”. If we could get 20 more of this type of “rich” user, we would be profitable!

Habitat Money

The Habitat official currency is the Token.

The Economic Model

You are “hatched” with 2000t, and every day you log in, you receive 100t. Money can be won in contests/quests. You can buy and sell objects using automated machinery. The Vendroid sells stuff. The Pawn Machines buy it back. Each Vendroid makes the purchased item out of thin air. That’s right, no production costs. This leads to an interesting problem of runaway inflation. We never got enough people in the system to understand this effect, but got a taste of it when “The Big-Money Scam” happened:

The Big-Money Scam

During the Alpha test, “The Big Doll-Crystal Ball Scam” took place. In order to make the automated economy interesting, we made Vendroids so that they could have any price for any item. This was so we could have local, specialized economies (e.g. a widget could cost a little less if you bought it at Jack’s Place instead of The Emporium). In two vending machines across town from each other were two items that were for sale for less than the pawn machine would buy them back for: Dolls (for sale at 75t, hock at 100t) and Crystal Balls (for sale at 18000t, hock at 30000t). One weekend several persons participated in the Scam. They took their money, purchased many boxes, walked to the Doll Vendroid and bought as many as they could afford, walked back to town and pawned them. They repeated this process until they had enough to purchase Crystal Balls. This took many hours. The final result was at least 3 people with 100,000t – 500,000t. In one night the economy had been diluted, as the T1 (the Token Supply) had jumped 5 times! (for more on this Scam, see They Cheated!).
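The loop the scammers ran can be sketched in Python using the prices quoted above. This is an illustration under loud assumptions, not a record of what happened: the function names are hypothetical, the 2000t starting balance is simply the hatching grant from The Economic Model, and carrying limits, walking time, and the daily bonus are all ignored.

```python
# Prices quoted in the text; everything else here is a hypothetical sketch.
DOLL_BUY, DOLL_PAWN = 75, 100
BALL_BUY, BALL_PAWN = 18_000, 30_000

def round_trip(tokens, buy_price, pawn_price):
    """Buy as many items as affordable, pawn them all, return the new balance."""
    count = tokens // buy_price
    return tokens - count * buy_price + count * pawn_price

def trips_to_reach(tokens, target, buy_price, pawn_price):
    """Count round trips until the balance reaches `target`."""
    if tokens < buy_price:
        raise ValueError("cannot afford even one item")
    trips = 0
    while tokens < target:
        tokens = round_trip(tokens, buy_price, pawn_price)
        trips += 1
    return trips

print(round_trip(2000, DOLL_BUY, DOLL_PAWN))                # 2650
print(trips_to_reach(2000, BALL_BUY, DOLL_BUY, DOLL_PAWN))  # 8
print(round_trip(BALL_BUY, BALL_BUY, BALL_PAWN))            # 30000
```

Under these assumptions, eight Doll trips turn a fresh Avatar's 2000t into enough for a first Crystal Ball, and every Crystal Ball after that nets 12,000t.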

What the Wealthy Did

The new rich class now began to distribute their wealth by having treasure hunts. There were other quests and hunts that gave many users fat bank accounts. Soon a true economy began to emerge: Heads. Since you can change heads in Habitat, and unique heads were often prizes or gifts from the oracle or very expensive, their value skyrocketed. This would definitely be true when thousands of users came along, as there are only 200 or so styles of heads, and each user is initially given a choice from about 30 of those. Heads are the only obvious form of customization an Avatar has.

The Issues

Introduction

As I have said before, Habitat is a society, and as such, has spawned many debates about how the Habitat world should be. Very few “rules” were imposed on the world from the start.

A theme at the core of many of the arguments is philosophical. Is an Avatar an extension of a human being, a Pac-man like critter — destined to die 1000 deaths — or something else? Our answer is all of the above and none. Again the people decide what is right. In reading about the issues, keep in mind that our sample was very small, and skewed towards Actives and Motivators.

Early Thieving

At first, during early testing, we found out that people were taking stuff out of others’ hands and shooting people in their own homes. We changed the system to allow thievery and gunplay only in non-city regions. (That one was easy! It gets more complicated from here)

Dial H for Murder

The hottest issue was, by far, murder. In Habitat, if an Avatar is “killed” he is teleported back home, with his pocket emptied, what he was holding dropped, his hit-points restored, and his head put in his hand. However, only what he had with him and his position in the universe has changed. One of the Motivators took to randomly shooting people roaming outside of town. A debate arose: Is Habi-Murder a crime? Should all weapons be banned? Is it all “just a game”? There was such a debate on the issue, that a vote was taken. We were surprised by the results. 50% said “A crime” and 50% said “no — it is part of the fun”. Our outlaw had in fact demonstrated that human-human interactive combat was fun for over half the audience. And since anyone who didn’t want to fight could just “ghost” and run away, there was no reason to consider the banning of weapons. (For more on personal combat, see Combat)

The Order of the Holy Walnut

One of the outstanding proponents of the anti-violence-in-Habitat view was also the first Habitat Minister. A Greek Orthodox Minister opened the first church in Habitat. His canons forbid his disciples to carry weapons, steal, or participate in violence of any kind. It was unfortunate that I had to eventually put a lock on the Church’s front door because every time he decorated (with flowers), someone would steal and pawn them while he was not logged in!

Wedded Bliss?

Three Habitat weddings took place in that church. These were not human-human weddings, but Avatar-Avatar. Their turfs were joined so that they could cohabit. There were some technical problems with this that should be resolved in any new versions. Only one account could enter a turf if the owner were not home. We hadn’t properly handled cohabitation.

The first Habitat divorce occurred 2 weeks after the third wedding. I guess Habitat is a bit too close to the real world for my taste! The first Habitat lawyers handled the divorce, including public postings all about town.

Entertaining the neighbors

The Party was one of my favorite activities. I liked to throw them at new Avatars’ houses. I would ESP a known “Passive” Avatar, and ask him where he lived. If he told me, I would send ESP to the “Actives” and “Motivators” that were on-line, telling them to teleport to the address. Great fun.

A close cousin to parties was the Sleep-Over. The users invented this on their own. Often private discussions would take place in a turf. It was considered a minor social honor to be invited to sleep-over. This meant to log-out while still in another’s turf. This was an honor because you would be able to log in later even if the host was not on. This would leave the host’s belongings open to plunder.

More on Stealing

Speaking of plunder… Stealing is still possible, even within city limits, as once an item is placed on the ground, it has no owner. Like murder, opinions on this issue are deeply divided and we think the best way to resolve it is to let (help) the players devise a limiting mechanism.

Secret Identities

In the original proposal, all Avatars would be able to have unique names (separate from their log-in names) and they could say they were anybody they wanted. Like a big costume party, no one would know who was who. I lost the battle for unique names, and QuantumLink wanted an “identify” function. It seemed the anonymity I wanted was lost. But I suggested a counter-proposal. A tit-for-tat rule. If you “peek” at someone else’s secret identity, you will be unmasked to that Avatar, and no one else would know the results. Some very interesting dynamics developed. Some people were offended if they were ID’ed right away. And others never bothered, if you said “HI! I’m WINGO”. I remember one time that I convinced someone that I was another person by sending ESP as “myself” to the person in the same region.
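The tit-for-tat rule is simple enough to sketch in a few lines of Python. This is a toy model, not the Habitat implementation: the `Avatar` class, the `known` set, and the `peek` function are all hypothetical names chosen for illustration.

```python
class Avatar:
    """Toy avatar: a hidden log-in name plus the identities it has learned."""
    def __init__(self, login_name):
        self.login_name = login_name
        self.known = set()  # log-in names this avatar has learned

def peek(peeker, target):
    """Tit-for-tat: the peeker learns the target's identity and, in exchange,
    is unmasked to the target. Nobody else learns anything."""
    peeker.known.add(target.login_name)
    target.known.add(peeker.login_name)

wingo, foon, bystander = Avatar("WINGO"), Avatar("FOON"), Avatar("THIRD")
peek(wingo, foon)
print(wingo.known, foon.known, bystander.known)
```

The point of the design is that curiosity has a price: the only way to learn who someone is costs you your own mask, and only with respect to that one Avatar.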

Business

The economy was a minor issue. Most everybody had plenty of tokens (except the Passives). In an attempt to open the retail business to Avatars, a Drug store was opened, with a locked room in back that only the owner could enter that contained the only vendroid that sold Habitat healing potions and poisons. The shopkeeper would pay the fixed price, and could charge whatever he wanted for resale. It was a success except for the fact that the owner logged in at strange hours.

To Govern or Not to Govern

Our design directive was not to interfere in Habitat politics or set up a government or law establishment. Many people thought that crimes of killing and theft ought to be punished. We decided to hold sheriff elections. The favorite candidate was a friendly guy, but many didn’t know that this very same Avatar was the brains behind The Scam. There was a public debate in the Populopolis Meeting Hall with the 3 AvaCandidates making statements and fielding questions. I was among the ghosted attendees. I would pre-type some comment like “Vote for Foon!”, de-ghost quickly, press return to send my message, and become a ghost again. No one would have any time to tell who I was before I was gone. This was fun. During the Question and answer period I, before appearing, typed this question: “Please explain to us why we should vote for a sheriff who obtained his campaign fees rather -ah- UNUSUALLY?”. This started a real-life-like mud slinging fight. As it turns out, he won by a landslide anyway. Populopolis had a sheriff.

For weeks he was nothing but a figurehead. We were stumped about what powers to give him. Should we give him the right to shoot anyone anywhere? Give him a more powerful gun? A wand to >zap< people to jail? What about courts? Lawyers? Laws? Late in the test the answer struck me: ask the users! A “Committee for a Safer Habitat” sent out a mailer to everyone asking this question: “What should the sheriff be able to do?”. Then another election was held on two questions: “What is a crime?” and “What should the sheriff be empowered to do?”. The results could not be acted on before the test ended. An interesting side effect of this was that it became apparent there are two basic camps: anarchy and government. It will be great to see what happens with thousands of users facing this decision. Habitat need not be set up with a “default” government (like reality).

Magic Inflation

Besides economic inflation, we also had Magic inflation. In the Dungeon of Death, the designer had a vending machine that sold, for only 1000t, magic wands that teleport anyone you point them at to the Oracle. At this time magic wands worked forever. Soon everyone had one of these wands and people were zapping each other all over the place. Crime got really out of hand when criminals would travel as ghosts, wait for people to put their belongings down for a second, de-ghost – zap – and steal. I had always planned on implementing a limited “charges” feature but was too busy tracking down bugs. Soon it was clear it was time to act. “God” changed the rules, and limited magic. The issue became foremost in the discussion arena: Some people were using these rods for the “good” cause of rescuing people when they got lost. Many were outraged that the rules changed. Ask yourself this question: What would you have done? This is a tricky question, fundamental for the chief operator to understand.

Motivators & Caretakers at work

By far the Caretaker who had the greatest effect on his fellow users was the editor of the Habitat newspaper The Weekly Rant. This user tirelessly spent 20-40 hours a week (free account) composing a 20, 30, 40 or even 50 page tabloid containing the latest news, events, rumors, and even fictional articles. This was no small feat: he had only the barbaric Habitat paper editor, and no other tools. After he had composed the pages of an issue, he would arrange them in several chests of drawers in The Rant office and send me mail. I would publish it by using a special host program that would bind them into a book object and distribute it to the news vendroids, check the copy by hand for errors, and deliver a copy to the office (in Habitat). This worked great, but took massive amounts of his personal time. I began to automate the process further just as Habitat operation changed hands. The new publisher didn’t publish on time, delayed getting the tools ready to speed up creation, made editorial changes (he wanted it to be shorter, less fiction), and didn’t hand-deliver a copy of the final product. The editor quit. Just like real life: Someone new runs the show and the sensitive leave. Again, these people are rare and should be handled carefully. The Rant will never be the same.

Duels

One of the wands we implemented caused the victim to perform the “jump” gesture, accompanied by a “Hah!” word balloon. It was fun for a while, mostly because you could really affect another Avatar, but it got old fast. Soon a game was developed completely by the users involving these wands: The Duel. The rules were simple: two combatants, two wands, one judge. When the judge says “go” the first to “hit” the other with the wand 3 times wins. Not as easy as it sounds, since the duelists are allowed to run around.

Tours

Another Caretaker was the #1 all-time most-traveled Avatar. He also was the longest lived. When new people started logging in, he took them on guided tours of this strange new world. He made them feel like they had a “friend” in town.

Combat

“Conflict is the essence of drama”. We used this quote in the initial Habitat design document. Habitat (it was then named “Microcosm”) was to have personal combat in the form of weapons. Most computer games had combat, and we were offering a chance for users to affect each other!

Here I will explain how it actually ended up working. There were ranged weapons and hand-to-hand. An Avatar is born with 255 hit points (the actual number is masked from the user, and a “general state of health” message gives the user some idea how bad off he is.) While holding the weapon, you select a target and DO (attack). There is a telecommunications delay that may affect the hit-or-miss result. Each successful attack does some small amount of damage (e.g. 20 points). You are always informed when you are shot, as your Avatar is knocked onto his rump.

As you can see, it would take quite a few hits to “kill” a healthy Avatar. Not only that, but you can avoid being damaged if the attacker can’t “touch” you in 2 ways: 1) by turning into a ghost or 2) running around (not standing still). You use #2 when you are in a gunfight where you are shooting back. This seems to be a working dynamic. If you really, really are low on hit points, you travel the “wild” regions as a ghost. There are also devices that will restore your hit points. The real problem is communicating this to new users, who are often standing around in a region when a bandit comes along with a gun. The neophyte hears a “bang” and sees his Avatar knocked on his can. Instead of acting, he types a message like “What was that? Why am I sitting down?”. Meanwhile, the bandit cranks out another 12 bullets…. Dead beginner probably had all of his money and stuff in his pocket too! This problem should be corrected in the Avatar Handbook, explaining that guns are dangerous (something we thought people would assume on their own).
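As a rough check on “quite a few hits”, here is the arithmetic in Python. It is a sketch only: it treats the 20 points mentioned above as the damage of every hit, which the text offers merely as an example figure, and the function name is hypothetical.

```python
def hits_to_kill(hit_points=255, damage_per_hit=20):
    """Smallest number of hits whose total damage reaches `hit_points`."""
    return -(-hit_points // damage_per_hit)  # ceiling division on integers

print(hits_to_kill())  # 13
```

Under that assumption a healthy Avatar goes down on the thirteenth hit, which squares with the bandit’s first shot followed by “another 12 bullets”.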

For more on special types of combat see Magic Inflation, Duels, and Dungeon of Death.

The Scheduled Events

The D’nalsi Island Adventure

The first treasure-hunt ever planned for Habitat was mine, the D’nalsi Island Adventure. It took me hours to design, weeks to build (including a 100-region island), and days to coordinate the actors involved. I had taken several guesses as to how long it would take the players to perform each “segment” of the quest. The mission: recover the lost “Amulet of Salesh”. First: A trial, introducing the characters and the first clues. Second: Salesh hires the adventurers. Third: The players needed to figure out the “secret” teleport address. Fourth: they must find the door to the hidden cave and solve the riddle. Last: find the hidden crawlway and the buried chest containing the amulet. The prize was 25,000t.

The first part was in the form of a “dinner theater”-like play, set in the county courthouse. It was heavily attended. Since it was set up as an introduction, there was no appropriate “time” for the players to discover anything.

On the day that Salesh “hired” adventurers to find his amulet, he gave out copies of a map of the island. Hidden on this map was a word that was the teleport address to the island. After about 15 minutes of hiring, when about the tenth Avatar was hired, Salesh (me) received an ESP from one of the Motivators: He had discovered the teleport address. Darn! It seemed that the others had no idea where to start, so I sent ESP to all the players announcing that the teleport address had been discovered to be a word on the map.

Within 8 hours the treasure had been recovered by that person who had first discovered the island. This was so soon that almost half the adventurers (the novices) had not yet even discovered the teleport address! It was clear that there is a very wide range of “adventuring” skills in the Habitat audience, and various events need to be better targeted, and should include handicapping mechanisms so that those behind don’t get more and more behind.

The Dungeon of Death

This “combat oriented” dungeon was the brainchild of a Caretaker that had recently become a Q-Link in-house employee. It shows that an experienced “insider” could design a successful event using the understanding he gained through being a player first. (Note: I had nothing to do with this design, so it was my first event as a participant)

For weeks ads appeared in The Rant announcing that the Duo of Dread, DEATH and THE SHADOW, were challenging the adventurers to come to their lair. Soon, on the outskirts of town, a dungeon was discovered. Outside a sign read “Danger, enter at your own risk.” Two operators were logged in as DEATH and SHADOW, armed with guns that could kill in 1 shot (instead of the usual 12). The dungeon had totally dark (light did not help), dead end (trapped), and duplicate regions. It was clear that any explorer had better be prepared to “die” several times before mastering the dungeon. The rewards were pretty good: 1,000t minimum and access to a vending machine that sold “teleport” wands (see Magic Inflation). I even got a chance to play DEATH for one night. It was a slaughter. Avatars were dropping like flies… but most of them had prepared by emptying their pockets. When I got to play DEATH, I found him in one of the “dead ends” with four other trapped Avatars. I de-ghosted and started shooting, but was shot twice myself and died. Shoot! The last operator had not healed damage from his last encounters! The worst part of this is that “when you die, what is in your hands is dropped”. Yep. Some normal Avatar now had the “elephant” gun that could kill in one shot. The most valuable weapon in Habitat. What should I do? I later found out that this was not the first time this had happened: it had happened to a Q-Link operator, and they “forced” the Avatar to give it back. I did something else: As DEATH (never identifying my true self) I threatened to kill the new owner. She replied that she would never leave town, thus being safe. OK, I think, she’s smart. After about an hour we settle on a deal, 10,000t to buy the gun back. We meet at The Oracle in town, where it is safe, and make the exchange. It was great. The entire “operations accident” was handled within the game universe with no “external” interference.

R&R weekend adventures

These were short (1-2 hours) quests where a user pressed one of ten magic buttons to receive a clue to find one of ten hidden keys to be used in one of ten hidden safes. These were the all-around best quests to run (there were 3 of them) because there were always 7-10 winners. The only problem here was the Time Zone problem: The event had to be scheduled so that as many people as possible could participate from the moment it started. Q-Link access started at 6pm local time. This meant that for the Californians to have a chance, the adventure would have to start at 9pm East coast time at the earliest.

The Money Tree

The Quest for the Money Tree is the first quest an Avatar learns about from reading his free Welcome Wagon version of The Rant placed in his Turf. There is a tree in a forest that will dispense 100t for every Avatar once. Everyone can feel like they have “found” the magic tree.

The Tome of Wealth and Fame

This was also one of the originally conceived of quests. A certain set of tablets contained the Tome of Wealth and Fame. If you found it, you were to hide it somewhere else. You would receive a reward based on how long it took another to find it. The problem with this was that the world was so large that it often took weeks for someone to find the tome. It wasn’t an active process because, if you tried, it would take days of on-line time to find.

The Long and Short of Quests

A trend became clear about quests in Habitat. The winners of the “long range” quests like The D’nalsi Island Adventure were almost always people with free accounts. The freebies would stay on for hours on end to gain wealth, things and status (See Habitat Money: “The Scam”). The paying customers could only come on 1-2 hours/week. The idea that people would be able to “work on” a quest for weeks is bogus. The long range quest must be something that either “everyone” can win or does not provide some significant advantage in the world. (See The Money Tree)

Grand Openings

A real surprise was the popularity of the “Grand Opening”. This is the ribbon-cutting event when new regions were added to the world. Tokens and prizes were often hidden in the new regions, but it seems that the audience (especially the Passives) had an insatiable hunger to see new places and things. The Grand Opening of the Popustop Arms apartment building was the most heavily attended event of the Pilot test.

Disease

One of the more successful “games” we invented for Habitat was the disease. There are three strains currently defined:

- Cooties

- Happy Face

- Mutant (AKA The Fly)

We only were able to test Cooties with live players, but it was a hit. It works like this: Several initial Avatars are infected with a “Cootie” head. This head replaces the current one, and cannot be removed except by touching another non-infected Avatar. Once infected, you can not be infected again that day. In effect, this game is “tag” and “keep away” at the same time. Often people would allow themselves to be infected just so they could infect “that special person that they know would just hate it!” Every time the disease was spread, there was an announcement at least a week before, and for at least a week afterward it was the subject of major discussions. One day that the plague was spread, a female Avatar that was getting married got infected 1 hour before her wedding! Needless to say, she was very excited, and in a panic until a friend offered to take it off her hands.
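The Cooties rules described above amount to a small state machine, which can be sketched in Python. This is a toy model under stated assumptions, not the Habitat code: the class and method names are hypothetical, and “that day” is modeled by an explicit `new_day` reset.

```python
class CootieGame:
    """Toy model: the head passes on touch, with one infection per avatar per day."""
    def __init__(self, initially_infected):
        self.infected = set(initially_infected)
        self.had_it_today = set(initially_infected)

    def touch(self, carrier, target):
        """Carrier tries to pass the head to target; returns True if it moved."""
        if carrier not in self.infected:
            return False
        if target in self.infected or target in self.had_it_today:
            return False  # already infected, or immune for the rest of the day
        self.infected.remove(carrier)
        self.infected.add(target)
        self.had_it_today.add(target)
        return True

    def new_day(self):
        """Daily immunity resets; current carriers still count as infected today."""
        self.had_it_today = set(self.infected)

game = CootieGame({"A"})
print(game.touch("A", "B"))  # True: B now wears the head
print(game.touch("B", "A"))  # False: A already had it today
print(game.touch("B", "C"))  # True: C now wears the head
```

The daily immunity is what makes it “keep away” as well as “tag”: you cannot simply hand the head back to whoever gave it to you.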

Some interesting variations to try on this are: Touch 2 people to cure; this would cause quite a preponderance of infected people late in the day. The “Happy Face” plague: This simple head has the side effect of changing any talk message (word balloons) to come out as “HAVE A NICE DAY!”… can you imagine infecting some unsuspecting soul, and him saying back to you HAVE A NICE DAY! ??? ESP and mail still work normally, so the user is not without communications channels. The Mutant Plague: The head looks like the head of a giant housefly and it has the effect of changing talk text to “Bzzz zzzz zzzz”. We think these all will be great fun.

Deception & Trickery

These were fun things to do to your fellow Avatar.

My invention – Type this: “You have *mail* in your pocket.” and watch the fun as people say “That’s strange! I don’t have mail!”

Chip thought this up – Send this ESP message “ESP from: yournamehere”, then quickly send a “Hello” also. Your “Hello” ESP will be announced 3 times!

We developed a form of communication “harassment”. You can do this on almost any network. Just coordinate a few people all sending very short ESP messages to the victim. His screen will scroll faster than he can read. This was used against the social outcast mentioned in Dial H for Murder.

Geek Gods Revisited

“They Cheated!”

As the One and Only Habitat Oracle and Lead Programmer I was subject to some interesting conflicts of interest in operations. I cared intensely about the experience each user was having. I worried about bugs. When The Scam happened, I flipped my lid! “They Cheated! And they didn’t report it in a bug report!”. The first indication I had was looking at the record for most tokens. Then I searched for bug reports. Then I sent Habitat mail to the two newest rich people asking them where they got all that money overnight. The reply I got was “I got it fair and square! And I am not going to tell you how.” At this point I should have realized that my roles as Oracle and Programmer were at odds, and that the users were not aware of the relationship of my Avatar (the one who mailed them asking about the money). A Geek God must not lose his temper. Remember, Habitat is its own little world.

Fan Mail

The greatest reward for being The System Operator is Fan Mail. When the Caretakers (who always end up finding out who the oracle really is) tell you that the world is a fun place to be because of you, it makes it all worthwhile. It does matter what you do (see The Rant). It all starts at the top of the pyramid. A bad Operator can drag the system down by not keeping track of details and promises. Trust feedback.

Ideas to be tried

Monster of the Month Club

Since we (theoretically) could remotely update the disk we considered a “Monster of the Month Club” scheme where we would download a new Avatar body style for special events. There were some images that were not put in the current implementation of the system that would be great: a car and a motorcycle. Some other interesting ideas included a giant (full screen height) foot that would hop around, animals, and floating objects.

Machivelli

This game was designed by Chip. It required no programming support. The game is about politics and secret organizations. The idea was that the Operators and Caretakers would start the game by making up two separate secret organizations whose goals were to “take over” Habitat. They could only do this by recruiting more and more new members (while still keeping the organization a secret!). Secret “handshakes” could be set up. Meetings. Recruiting drives. Of course, soon there would be gang warfare. Who knows where it might go?

A Final Word

As I close this document I find I keep remembering dozens of other stories to tell. And all of these come from my experiences with only 200 or so people! Imagine what it will be like with tens of thousands of creative minds at work! Though as of this writing Habitat is still not a released product, I still am proud of the world we created. I really expect to be meeting you soon “On The Other Side” in a world not unlike Habitat.

August 28, 2019

The Unum Pattern

Warning: absurd technical geekery ahead — even compared to the kinds of things I normally talk about. Seriously. But things will be back to my normal level of still-pretty-geeky-but-basically-approachable soon enough.

[Historical note: This post has been a long time in the making — the roots of the fundamental idea being described here go back to the original Habitat system (although we didn’t realize it at the time). It describes a software design pattern for distributed objects — which we call the “unum” — that I and some of my co-conspirators at various companies have used to good effect in many different systems, but which is still obscure even among the people who do the kinds of things I do. In particular, I’ve described this stuff in conversation with lots of people over the years and a few of them have published descriptions of what they understood, but their writeups haven’t, to my sensibilities at least, quite captured the idea as I conceive of it. But I’ve only got myself to blame for this as I’ve been lax in actually writing down my own understanding of the idea, for all the usual reasons one has for not getting around to doing things one should be doing.]

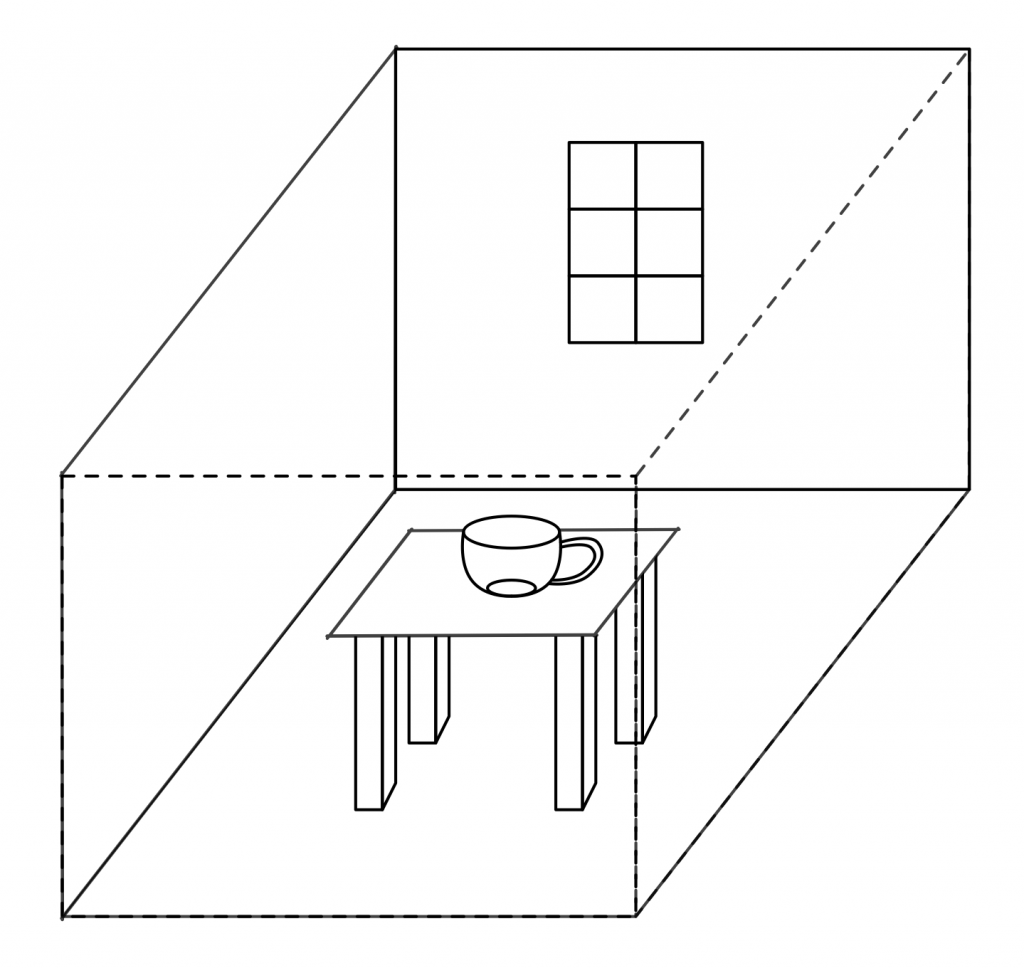

Consider a distributed, multi-participant virtual world such as Habitat or one of its myriad descendants. This world is by its nature very object oriented, but not in quite the same way that we mean when we talk about, for example, object oriented programming. This is confusing because the implementation is, itself, very object oriented, in exactly the object oriented programming sense.

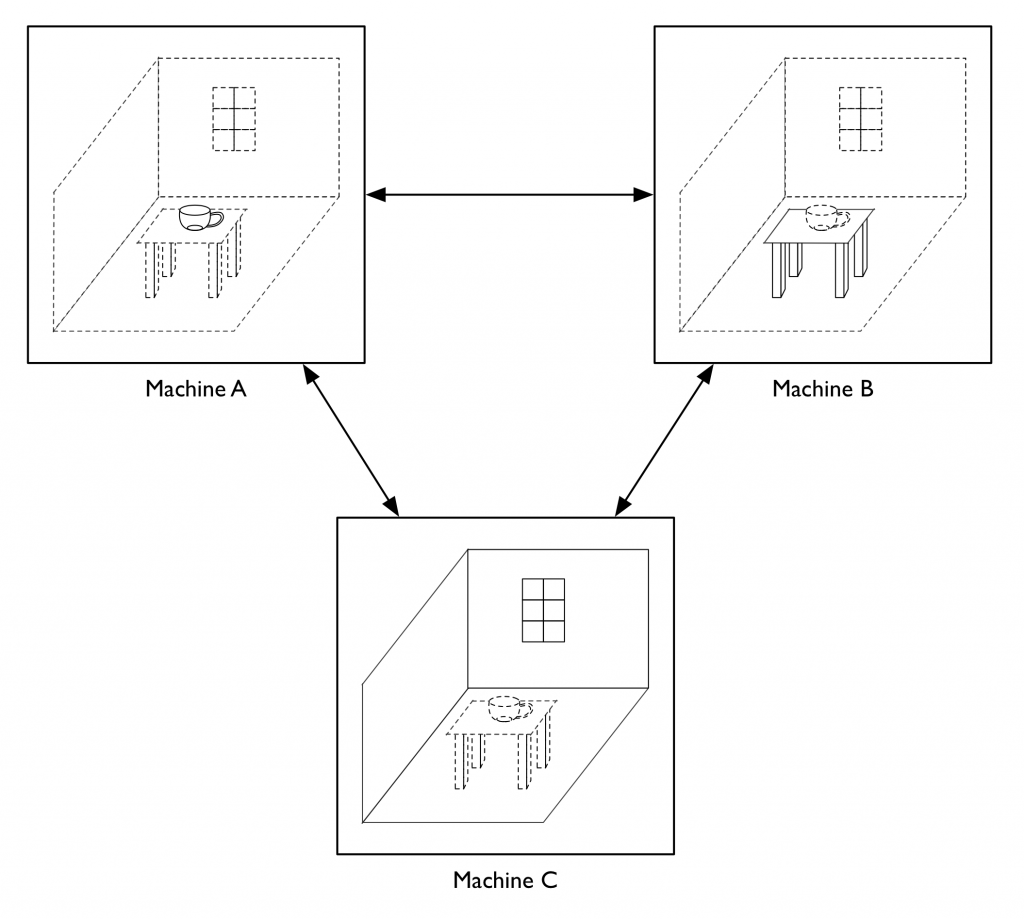

Imagine being in this virtual world somewhere, say, in a room in a building in downtown Populopolis. And there is a table in the room and sitting on the table is a teacup. Well, I said you were in the virtual world, but you’re not really in it, your avatar is in it, and you are just interacting with it through the mediation of some kind of client software running on your local computer (or perhaps these days on your phone), which is in turn communicating over a network connection to a server somewhere. So the question arises, where is the teacup, really? Certainly there is a representation of the teacup inside your local computer, but there is also a representation of the teacup inside the server. And if I am in the room with you (well, my avatar, but that’s not important right now), then there’s also a representation of the teacup inside my local computer. So is the teacup in your computer or in my computer or in the server? One reasonable answer is “all of the above”, but in my experience a lot of technical people will say that it’s “really” in the server, since they regard the server as the source of truth. But the correct answer is that the teacup is on a table in a room inside a building in Populopolis. The teacup occupies a different plane of existence from the software objects that are used to realize it. It has an objective identity of its own — if you and I each refer to it, we are talking about the same teacup — but this identity is entirely distinct from the identities of any of those software objects. And it has such an identity, because even though it’s on a different plane there still needs to be some kind of actual identifier that can be used in the communications protocols that the clients and the server use to talk to each other, so that they can refer to the teacup when they describe their manipulations of it and the things that happen to it.

You might distinguish between these two senses of “object” by using phrases with modifiers; for example, you might say “world object” versus “OOP object”, and in fact that is what we did for several years. However, this terminology made it easy to fall back on the shorthand of just talking about “objects” when it was clear from context which of these two meanings of “object” you meant. Of course, it often turned out that this context wasn’t actually clear to somebody in the conversation, with confusion and misunderstanding as the common result. So after a few false starts at crafting alternative jargon we settled on using the term “object” to always refer to an OOP object in an implementation and the term “unum”, from the Latin meaning a single thing, to refer to a world object. This term has worked well for us, aside from endless debates about whether the plural is properly “una” or “unums” (my opinion is: take your pick; people will know what you mean either way).

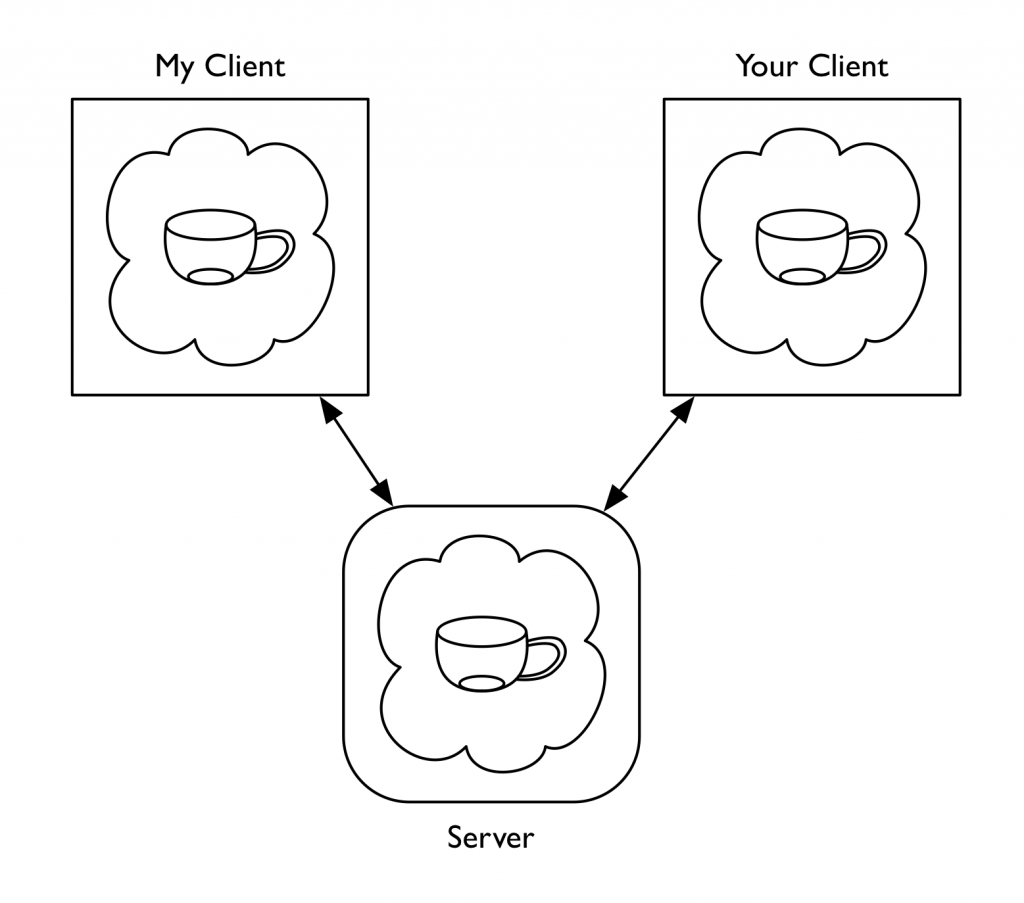

Of course, we still have to explain the relationship between the unum and its implementation. The objects (using that word from now on according to our revised terminology) that realize the unum do live at particular memory addresses in particular computers. We think of the unum, in contrast, as having a distributed existence. We speak of the portion of the unum that resides in a particular machine as a “presence”. So to go back to the example I started with, the teacup unum has a presence on the server and presences on each of our client machines.

(As an aside, for the first few years of trying to explain to people how Habitat worked, I would sometimes find myself in confused discussions about “distributed objects”, by which the people with whom I was talking meant individual objects that were located at different places on the network, whereas I meant objects that were themselves distributed entities. I didn’t at first realize these conversations were at cross purposes because the model we had developed for Habitat seemed natural and obvious to me at the time — how else could it possibly work, after all? — and it took me a while to twig to the fact that other people conceived of things in a very different way. Another reason for introducing a new word.)

In the teacup example, we have a server presence and some number of client presences. The client presences are concerned with presenting the unum to their local users while the server presence is concerned with keeping track of that portion of the unum’s state which all the users share. Phrased this way, many people find the presence abstraction very natural, but it sometimes leads them to jump to conclusions about what is going on, resulting in still more confusion and conversation at cross purposes. People who implement distributed systems often build on top of frameworks that provide services like data replication, and so it is easy to fall into thinking of the server presence as the “real” version of the unum and the client presences as shadow copies that maintain a (perhaps slightly out of date) cached representation of the true state. Or thinking of the client presences as proxies of some kind. This is not exactly wrong, in the sense that you can certainly build systems that work this way, as many distributed applications — possibly including most commercially successful MMOs — actually do. However, it’s not the model I’m describing here.

One problem with data replication based schemes is that they don’t gracefully accommodate use cases that require some information be withheld from some participants (it’s not that you absolutely can’t do this, but it’s awkward and cuts against the grain). It’s not just that the server is authoritative about shared state, but also that the server is allowed to take into account private state that the clients don’t have, in order to determine how the shared state changes over time and in response to events.

A server presence and a client presence are not doing the same job. The fundamental underlying concept that presences embody is not some notion of master vs. replica, but division of labor. Each has distinct responsibilities in the joint work of being the unum. Each is authoritative about different aspects of the unum’s existence (and typically each will maintain private state of their own that they do not share with the other). In the case of the client-server model in our example, the client presence manages client-side concerns such as the state of the display. It worries about things like 3D rendering, animation sequencing, and presenting controls to the human user to manipulate the teacup with. The server keeps track of things like the physical model of the teacup within the virtual world. It worries about the interactions between the teacup and the table, for example. Each presence knows things that are none of the other presence’s business, either because that information is simply outside the scope of what the other presence does (such as the current animation frame or the force being applied to the table) or because it’s something the other presence is not supposed to know (such as the server knowing that this particular teacup has a hidden flaw that will cause it to break into several pieces if you pour hot water into it, revealing a secret message inscribed on the edges where it comes apart). The various different client presences may also have information they do not share with each other for reasons of function or privacy. For example, one client might do 3D rendering in a GUI window while another presents only a textual description with a command line interface. Perhaps the server has revealed the secret message hidden in the teacup to my client (and to none of the others) because I possess a magic amulet that lets me see such things.
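To make the division of labor concrete, here is a minimal Python sketch of the teacup example. It is an illustration under my own assumptions, not code from Habitat or Elko: the class names, field names, and message shapes are all hypothetical. The point it tries to show is that each presence holds state the other never sees, and that the server uses its private state to decide how the shared state changes.

```python
from dataclasses import dataclass


@dataclass
class TeacupServerPresence:
    """Server side of the teacup unum: world model and secrets (hypothetical)."""
    unum_id: str
    contents: str = "empty"        # shared state, reported to clients
    has_hidden_flaw: bool = True   # private state, never sent to clients

    def pour(self, liquid: str) -> dict:
        """Apply a world-model rule and return only what clients may see."""
        if self.has_hidden_flaw and liquid == "hot water":
            self.contents = "broken"
            return {"op": "shatter"}
        self.contents = liquid
        return {"op": "fill", "liquid": liquid}


@dataclass
class TeacupClientPresence:
    """Client side of the same unum: presentation concerns only (hypothetical)."""
    unum_id: str
    animation_frame: int = 0       # private to this client
    appearance: str = "intact cup"

    def on_message(self, msg: dict) -> None:
        """Update the local presentation from a behavioral message."""
        if msg["op"] == "shatter":
            self.appearance = "shards"
        elif msg["op"] == "fill":
            self.appearance = f"cup of {msg['liquid']}"
        self.animation_frame += 1


server = TeacupServerPresence("teacup-1")
client = TeacupClientPresence("teacup-1")
client.on_message(server.pour("hot water"))
print(client.appearance)  # prints: shards
```

Note that the client learns the cup shattered without ever being told about the flaw, and the server never hears about animation frames.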

We can loosely talk about “sending a message to an unum”, but the sending of messages is an OOP concept rather than a world model concept. Sending a message to an unum (which is not an object) is really sending a message to some presence of that unum (since a presence is an object). This means that to designate the target of such a message, the address needs two components: (1) the identity of the unum and (2) an indicator of which presences of that unum you want to talk to.

In the systems I’ve implemented (including Habitat, but also, perhaps more usefully for anyone who wants to play with these ideas, its Nth generation descendant, the Elko server framework), the objects on a machine within a given application all run inside a common execution environment — what we now call a “vat”. Cross-machine messages are transported over communications channels established between vats. In such a system, from a vat’s perspective the external presences of a given unum (that is, presences other than the local one) are thus in one-to-one correspondence with the message channels to the other vats that host those presences, so you can designate a presence by indicating the channel that leads to its vat. (For those presences you can talk to, anyway: the unum model does not require that a presence be able to directly communicate with all the other presences. For example, in the case of a Habitat or Elko-style system such as I am describing here, clients don’t talk to other clients, but only to the server.)

Here we encounter an asymmetry between client and server that is another frequent source of confusion. From the client’s perspective, there is only one open message channel — the one that talks to the server — and so the only other unum presence a client knows about is the server presence. In this situation, the identifier of the unum is sufficient to determine where a message should be sent, since there is only one possibility. Developers working on client-side code don’t have to distinguish between “send a message to the unum” and “send a message to the server presence of the unum”. Consequently, they can program to the conventional model of “send messages to objects on the other end of the connection” and everything works more or less the way they are used to. On the server side, however, things get more interesting. Here we encounter something that people accustomed to developing in the web world have usually never experienced: server code that is simultaneously in communication with multiple clients. This is where working with the unum pattern suddenly becomes very different, and also where it acquires much of its power and usefulness.
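The addressing asymmetry can be sketched in a few lines of Python. This is a toy model under my own assumptions (the `Vat` class, its `outboxes` dictionary, and the `peer` parameter are hypothetical names, not the Elko API): a vat with exactly one open channel can address a message with the unum identifier alone, while a vat with several channels also has to say which presence it means.

```python
from __future__ import annotations

from typing import Optional


class Vat:
    """Toy stand-in for an execution environment with outgoing channels."""

    def __init__(self, name: str) -> None:
        self.name = name
        # One outbox per open channel, keyed by the name of the peer vat.
        self.outboxes: dict[str, list[tuple[str, dict]]] = {}

    def open_channel(self, peer: str) -> None:
        self.outboxes[peer] = []

    def send(self, unum_id: str, payload: dict, peer: Optional[str] = None) -> None:
        """Address a presence by (unum id, channel); channel may be implied."""
        if peer is None:
            if len(self.outboxes) != 1:
                raise ValueError("unum id alone is ambiguous; name a channel")
            peer = next(iter(self.outboxes))
        self.outboxes[peer].append((unum_id, payload))


# A client has one channel, so the unum id is enough.
client = Vat("client-A")
client.open_channel("server")
client.send("teacup-1", {"op": "lift"})

# The server has a channel per client, so it must pick a presence.
server = Vat("server")
server.open_channel("client-A")
server.open_channel("client-B")
server.send("teacup-1", {"op": "lifted"}, peer="client-B")
```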

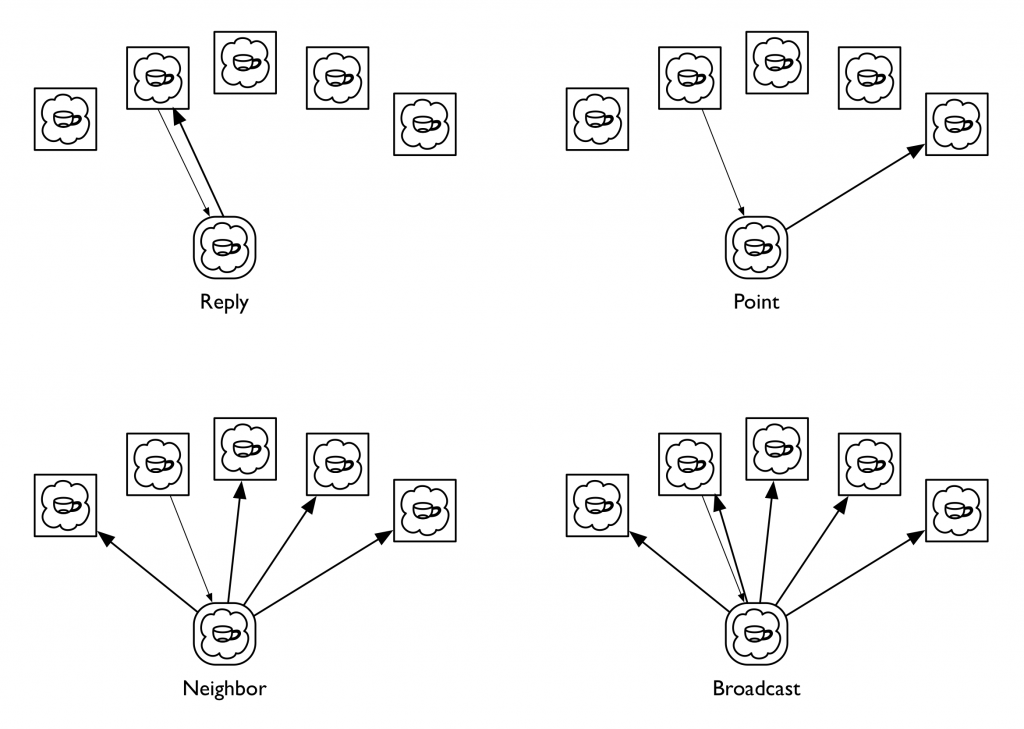

In the client-server unum model, the server can communicate with all of an unum’s client presences. Although a given message could be sent to any of them, or to all of them, or to any arbitrary subset of them, in practice we’ve found that a small number of messaging patterns suffice to capture everything we’ve wanted to do. More specifically, there are four patterns that in our experience are repeatedly useful, to the point where we’ve codified these in the messaging libraries we use to implement distributed applications. We call these four messaging patterns Reply, Neighbor, Broadcast, and Point, all framed in the context of processing some message that has been received by the server presence from one of the clients; among other things, this context identifies which client it was who sent it. A Reply message is directed back to the client presence that sent the message the server is processing. A Point message is directed to a specific client presence chosen by the server; this is similar to a Reply message except that the recipient is explicit rather than implied and could be any client regardless of context. A Broadcast message is sent to all the client presences, while a Neighbor message is directed to all the client presences except the sender of the message that the server is processing. The latter pattern is the one that people coming to the unum model for the first time tend to find weird; I’ll explain its use in a moment.
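The four patterns amount to four ways of choosing a recipient set relative to the sender of the message being processed. A rough Python sketch, assuming clients are identified by simple string names (the function names and the string pattern labels are mine, purely for illustration):

```python
def recipients(pattern, clients, sender, target=None):
    """Return the client presences a message should go to.

    `clients` is every client presence of the unum, `sender` is the client
    whose request the server is currently processing, and `target` is only
    used by the Point pattern.
    """
    if pattern == "reply":
        return [sender]
    if pattern == "neighbor":
        return [c for c in clients if c != sender]
    if pattern == "broadcast":
        return list(clients)
    if pattern == "point":
        if target not in clients:
            raise ValueError(f"unknown client: {target!r}")
        return [target]
    raise ValueError(f"unknown pattern: {pattern!r}")


def deliver(outboxes, payload, to):
    """Single fanout mechanism: one payload, one or many recipients."""
    for client in to:
        outboxes[client].append(payload)


clients = ["alice", "bob", "carol"]
outboxes = {c: [] for c in clients}
deliver(outboxes, {"op": "hello"}, recipients("neighbor", clients, "alice"))
print(outboxes)
```

In this sketch all four patterns funnel through the same `deliver` routine, so none of them is built out of the others.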

(Some people jump to the idea that these four are all generalizations of the Point message, thinking it a good primitive to actually implement the other three, but in the systems we’ve built the messaging primitive is a lower level construct that handles fanout and routing for one or many recipients with a single, common mechanism so that we don’t have to multiply buffer the message if it has more than one target. In practice, we use Point messages rather rarely; in fact, using a Point message usually indicates that you’re doing something odd.)

The reason for there being multiple client presences in the first place is that the presences all share a common context in which the actions of one client can affect the others. This is in contrast to the classic web model in which each client is engaged in its own one-on-one dialog with the server, pretty much unrelated to any simultaneous dialogs the server might be having with other clients that just happen to be connected to it at the same time. However, the multiple-clients-in-a-shared-context model is a very good match for the kinds of online game and virtual world applications for which it was originated (it’s not that you can’t realize those kinds of applications using the web model, but, like the comment I made above about data replication, it’s cutting against the grain — it’s not a natural thing for web servers to do).

Actions initiated by a client typically take the form of a request message from that client to an unum’s server presence. The server’s handler for this message takes whatever actions are appropriate, then sends a Reply message back informing the requestor of the results of the action, along with a Neighbor message to the other client presences informing them of what just happened. The Reply and Neighbor messages generally have different payloads since the requestor typically already knows what’s going on and often merely needs a status result, whereas the other clients need to be informed of the action de novo. It is also common for the requestor to be a client that is in some privileged role with respect to the unum (perhaps the sending client is associated with the unum’s owner or holder, for example), and thus entitled to be given additional information in the Reply that is not shared with the other clients.
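Here is roughly what such a handler might look like, as a Python sketch. The `TeacupServer` class, the `pick_up` request, and the payload fields are hypothetical; the only thing taken from the description above is the shape: do the work, Reply to the requestor with a status, and tell the Neighbors what happened with a different payload.

```python
class TeacupServer:
    """Toy server presence for one teacup unum (illustrative names only)."""

    def __init__(self, clients):
        self.clients = list(clients)
        self.holder = None                          # shared state
        self.outbox = {c: [] for c in self.clients}

    def handle_pick_up(self, sender):
        """Process a pick-up request from the client presence `sender`."""
        if self.holder is not None:
            # Nothing changed in the world, so only the requestor hears back.
            self.outbox[sender].append({"op": "pick_up", "ok": False})
            return
        self.holder = sender
        # Reply: the requestor already knows what it asked for and
        # only needs a status result.
        self.outbox[sender].append({"op": "pick_up", "ok": True})
        # Neighbor: everyone else learns about the action from scratch.
        for client in self.clients:
            if client != sender:
                self.outbox[client].append({"op": "picked_up", "by": sender})


server = TeacupServer(["alice", "bob", "carol"])
server.handle_pick_up("alice")
server.handle_pick_up("bob")
print(server.outbox)
```

Sending nothing to the neighbors on a failed request is an assumption of this sketch; a real system might well want bystanders to see the fumble.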

Actions initiated by the server, on the other hand, typically will be communicated to all the clients using the Broadcast pattern, since in this case none of the clients start out knowing what’s going on and thus all require the same information. The fact that the server can autonomously initiate actions is another difference between these kinds of systems and traditional web applications (server initiated actions are now supported by HTTP/2, albeit in a strange, inside out kind of way, but as far as I can tell they have yet to become part of the typical web application developer’s toolkit).

A direction that some people immediately want to go is to attempt to reduce the variety of messaging patterns by treating the coordination among presences as a data replication problem, which I’ve already said is not what we’re doing here. At the heart of this idea is a sense that you might make the development of presences simpler by reducing the differences between them — that rather than developing a client presence and a server presence as separate pieces of code, you could have a single implementation that will serve both ends of the connection (I can’t count the number of times I’ve seen game companies try to turn single player games into multiplayer games this way, and the results are usually pretty awful). Alternatively, one could implement one end and have the other be some kind of standardized one-side-fits-all thing that has no type-specific logic of its own. One issue with either of these approaches is how you handle the asymmetric information patterns inherent in the world model, but another is the division of labor itself. Systems built on the unum pattern tend to have message interfaces that are fundamentally about behavior rather than about data. That is, what is important about an unum is what it does. Habitat’s design was driven to a very large degree by the need for it to work effectively over 300 and 1200 baud connections. Behavioral protocols are vastly more effective at economizing on bandwidth than data based protocols. One way to think of this is as a form of highly optimized, knowledge-based data compression: if you already know what the possible actions are that can transform the state of something, a parameterized operation can often be represented much more compactly than can all state that is changed as a consequence of the action’s execution. In some sense, the unum pattern is about as anti-REST as you can be.
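The bandwidth argument is easy to see with a toy comparison. In the Python sketch below, both ends are assumed to already know what a "drink" operation does to a teacup, so the behavioral message only has to name the operation, whereas a data-based message has to carry every field that changed. The state fields, the `drink` operation, and the use of JSON as the encoding are all assumptions chosen for illustration; Habitat's wire format was nothing like JSON.

```python
import json

# Hypothetical shared state of a teacup, known to both ends.
state = {
    "unum": "teacup-1",
    "contents": "tea",
    "temperature": 80,
    "orientation": "upright",
}


def apply_drink(s):
    """The shared knowledge: what a 'drink' operation does to the state."""
    new = dict(s)
    new["contents"] = "empty"
    new["temperature"] = 20
    new["orientation"] = "tilted"
    return new


after = apply_drink(state)

# Behavioral protocol: name the operation; the receiver re-derives the rest.
behavioral = json.dumps({"unum": "teacup-1", "op": "drink"})

# Data protocol: ship every field the operation changed.
delta = {k: v for k, v in after.items() if state[k] != v}
data_based = json.dumps({"unum": "teacup-1", **delta})

# The receiver of the behavioral message ends up in the same state.
received = json.loads(behavioral)
assert apply_drink(state) == after and received["op"] == "drink"

print(len(behavioral), len(data_based))
```

The gap widens as operations touch more state, since the behavioral message stays the same size.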

One idea that I think merits a lot more exploration is this: given the fundamental idea that an unum’s presences are factored according to a division of labor, are there other divisions of labor besides client-server that might be useful? I have a strong intuition that the answer is yes, but I don’t as yet have a lot of justification for that intuition. One obvious pattern to look into is a pure peer-to-peer model, where all presences are equally authoritative and the “true” state of reality is determined by some kind of distributed consensus mechanism. This is a notion we tinkered with a little bit at Electric Communities, but not to any particular conclusion. For the moment, this remains a research question.

One of the things we did do at Electric Communities was build a system where the client-server distinction was on a per-unum basis, rather than “client” and “server” being roles assigned to the two ends of a network connection. To return to our example of a teacup on a table in a room, you might have the server presence of the teacup be on machine A, with machines B and C acting as clients, while machine B is the server for the table and machine C is the server for the room. Obviously, this can only happen if there is N-way connectivity among all the participants, in contrast to the traditional two-way connectivity we use in the web, though whether this is realized via pairwise connections to a central routing hub or as a true crossbar is left as an implementation detail. This kind of per-unum relationship typing was one of the keys to our strategy for making our framework support a world that was both decentralized and openly extensible. (Continuing with the question raised in the last paragraph, an obvious generalization would be to allow the division of labor scheme itself to vary from one unum to another. This suggests that a system whose unums are all initially structured according to the client-server model could still potentially act as a test bed for different schemes for dividing up functionality over the network.)
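The teacup, table, and room arrangement can be written down as a small lookup table. This Python sketch only illustrates the idea that "server" is a property of a (machine, unum) pair rather than of a machine; the names `server_of`, `role`, and `clients_of` are made up for the example.

```python
# Which machine hosts the server presence of each unum (from the example).
server_of = {"teacup": "A", "table": "B", "room": "C"}
machines = {"A", "B", "C"}


def role(machine, unum):
    """A machine's role is decided per unum, not per connection."""
    return "server" if server_of[unum] == machine else "client"


def clients_of(unum):
    """Every other participant holds a client presence of the unum."""
    return sorted(machines - {server_of[unum]})


for unum in server_of:
    print(unum, {m: role(m, unum) for m in sorted(machines)})
```

Machine B, for instance, comes out as a client of the teacup and the room but as the server of the table.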

Having the locus of authoritativeness with respect to shared state vary from one unum to another opens up lots of interesting questions about the semantics of inter-unum relationships. In particular, there is a fairly broad set of issues that at Electric Communities we came to refer to as “the containership problem”, concerning how to model one unum containing another when the una are hosted on separate machines, and especially how to deal with changes in the containership relation. For example, let’s say we want to take our teacup that’s sitting on the table and put it into a box that’s on the table next to it. Is that an operation on the teacup or on the box? If we have the teacup be authoritative about what its container is, it could conceivably teleport itself from one place to another, or insert itself into places it doesn’t belong. On the other hand, if we have the box be authoritative about what it contains, then it could claim to contain (or not contain) anything it decides it wants. Obviously there needs to be some kind of handshake between the two (or between the three, if what we’re doing is moving an unum from one container to another, since both containers may have an interest — or among the two or three and whatever entity is initiating the change of containership, since that entity too may have something to say about things), but what form that handshake takes leads to a research program probably worthy of being somebody’s PhD thesis project.

Setting aside some of these more exotic possibilities for a moment, we have found the unum pattern to be a powerful and effective tool for implementing virtual worlds and lots of other applications that have some kind of world-like flavor, which, once you start looking at things through a world builder’s lens, is a fairly diverse lot, including smart contracts, multi-party negotiations, auctions, chat systems, presentation and conferencing systems, and, of course, all kinds of multiplayer games. And if you dig into some of the weirder things that we never had the chance to get into in too much depth, I think you have a rich universe of possibilities that is still ripe for exploration.

May 1, 2019

Another Thing Found While Packing to Move

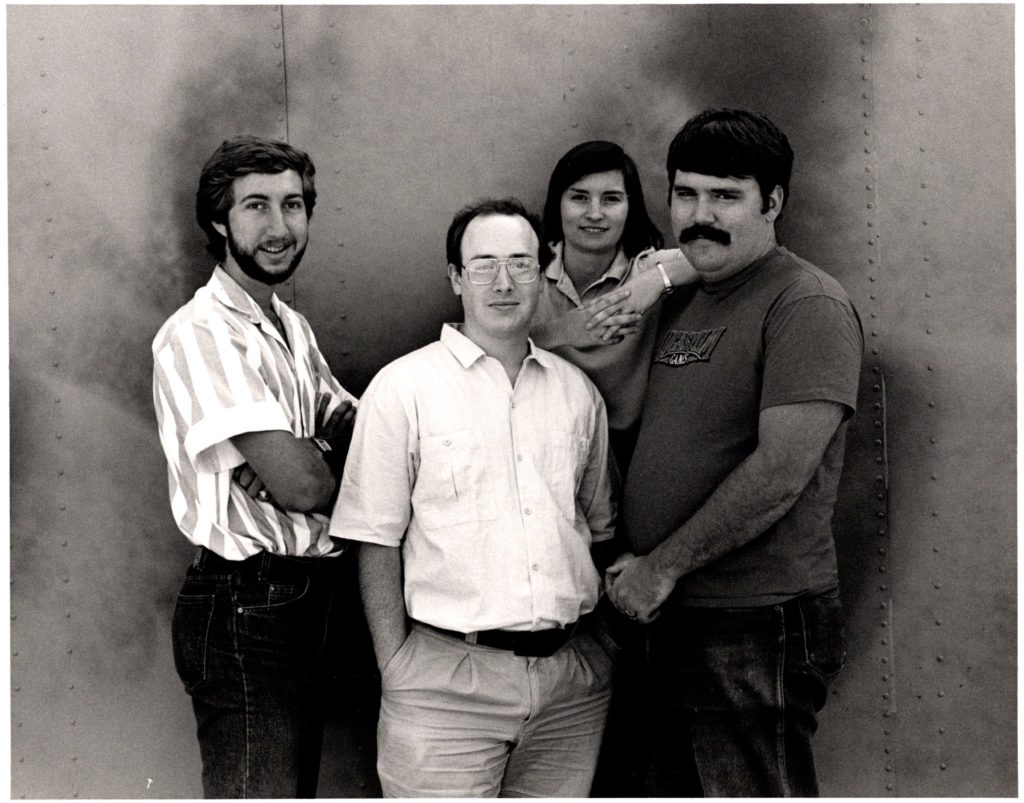

Getting ready to move has turned up all kinds of lost treasures. Here’s a publicity photo of the original Habitat programming team, taken next to a storage shed at Skywalker Ranch in 1987:

There were a couple of other developers who coded bits and pieces, but these four are the ones who lived and breathed the project full time for almost three years.

I particularly like this picture because it’s the only one I have that includes Janet Hunter. Janet was the main Habitat developer at QuantumLink. I think we shot this during one of Janet’s rare visits out west, since she was based in the Washington DC area where QuantumLink was. She wrote most of the non-game-specific parts of the original Habitat server and set the architectural pattern for nearly all the servers I’ve implemented since then.

It’s hard to believe I was ever that young, that thin, or had that much hair.

March 9, 2019

A Lost Treasure of Xanadu

Some years ago I found the cover sheet to a lost Xanadu architecture document, which I turned into this blog post for your amusement. Several people commented to me at the time that they wished they could see the whole document it was attached to. Alas, it appeared to have vanished forever.

Last weekend I found it! I turned up a copy of the complete document while sorting through old crap in preparation for having to move in the next few months. Now that I’ve found it I’m putting it online so it can get hoovered up by the internet hive mind. This is the paradox of the internet — nothing is permanent and nothing ever goes away.

This is a document I wrote in early 1984 at the behest of the System Development Foundation as part of Xanadu’s quest for funding. It is a detailed explanation of the Xanadu architecture, its core data structures, and the theory that underlies those data structures, along with a (really quite laughable) project plan for completing the system.

At the time, we regarded all the internal details of how Xanadu worked as deep and dark trade secrets, mostly because in that pre-open-source era we were stupid about intellectual property. As a consequence of this foolish secretive stance, it was never widely circulated and subsequently disappeared into the archives, apparently lost for all time. Until today!

What I found was a bound printout, which I’ve scanned and OCR’d. The quality of the OCR is not 100% wonderful, but as far as I know no vestige of the original electronic form remains, so this is what we’ve got. I’ve applied minimal editing, aside from removing a section containing personal information about several of the people in the project, in the interest of those folks’ privacy.

Anyone so inclined is quite welcome, indeed encouraged, to attempt a better conversion to a more suitable format. I’d do that myself but I really don’t have the time at the moment.

This should be of interest to anyone who is curious about the history of Project Xanadu or its technical particulars. I’m not sure where the data structures rank given the subsequent 35 or so years of advance in computer science, but I think it’s still possible there’s some genuinely groundbreaking stuff in there.

February 7, 2017

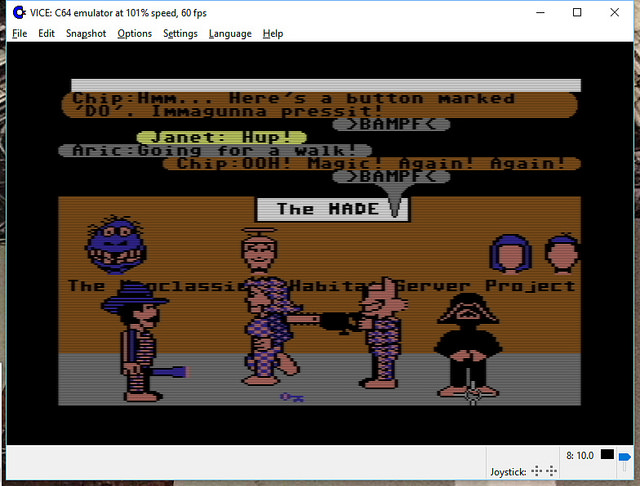

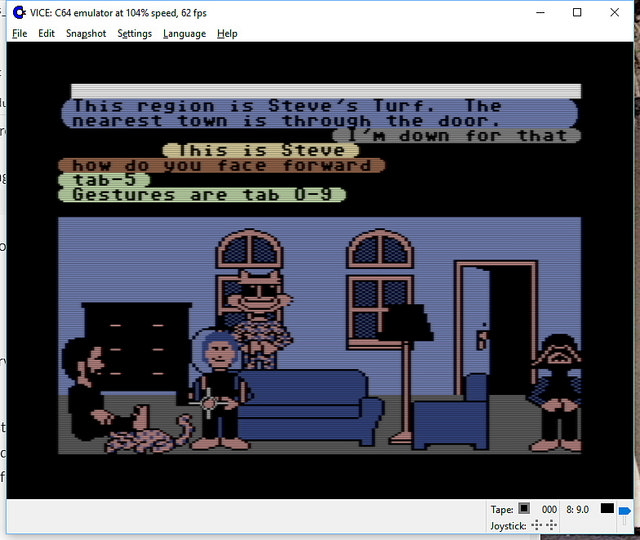

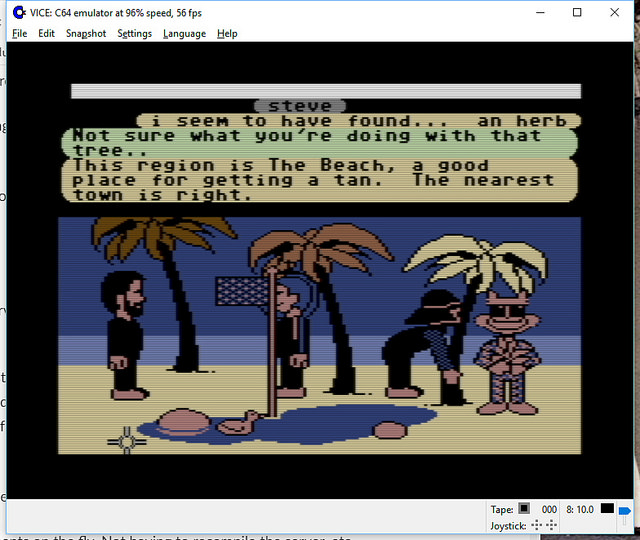

Open Source Lucasfilm’s Habitat Restoration Underway

It’s all open source!

Yes – if you haven’t heard, we’ve got the core of the first graphical MMO/VW up and running and the project needs help with code, tools, doc, and world restoration.

I’m leading the effort, with Chip leading the underlying modern server: the Elko project – the Nth generation gaming server, still implementing the basic object model from the original game.

http://neohabitat.org is the root of it all.

http://slack.neohabitat.org to join the project team Slack.

http://github.com/frandallfarmer/neohabitat to fork the repo.

To contribute, you should be able to use a shell, fork a repo, build it, and run it. Current developers use: shell, Eclipse, Vagrant, or Docker.

To get access to the demo server (not at all bullet proofed) join the project.

We’ve had people from around the world in there already! (See the photos)

http://neohabitat.org #opensource #c64 #themade

October 19, 2014

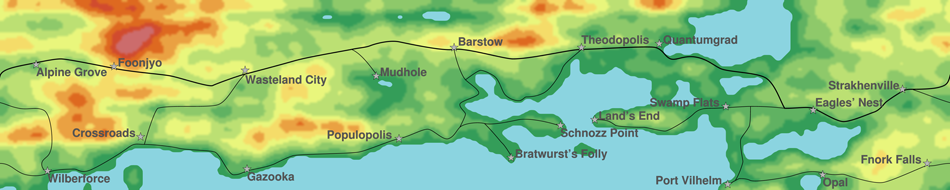

Map of The Habitat World

By now a lot of you may have heard about the initiative at Oakland’s Museum of Art and Digital Entertainment to resurrect Habitat on the web using C64 emulators and vintage server hardware. If not, you can read more about it here (there’s also been a modest bit of coverage in the game press, for example at Wired, Joystiq, and Gamasutra).

Part of this effort has had me digging through my archives, looking at old source files to answer questions that people had and to refresh my own memory of how things worked. It’s been pretty nostalgic, actually. One of the cooler things I stumbled across was the Habitat world map, something which relatively few people have ever seen because when Habitat was finally released to the public it got rebranded (as “Club Caribe”) with an entirely different set of publicity materials. I had a big printout of this decorating my office at Skywalker Ranch and later at American Information Exchange, but not very many people will have been in either of those places. Now, however, thanks to the web, I can share it publicly for the first time.

We wanted to have a map because we thought we would need a plan for enlarging the world as the user population grew. The idea was to have a framework into which we could plug new population centers and new places for stories and adventures.

The specific map we ended up with came about because I was playing around writing code to generate plausible topographic surfaces using fractal techniques (and, of course, lots and lots and LOTS of random numbers). The little program I wrote to do this was quite a CPU hog, but I could run it on a bunch of different computers in parallel and combine the results (sort of like modern MapReduce techniques, only by hand!). One night I grabbed every Unix machine on the Lucasfilm network that I could lay my hands on (two or three Vax minicomputers and six or eight Sun workstations) and let the thing cook for an epic all-nighter of virtual die rolling. In the morning I was left with this awesome height field, in the form of a file containing a big matrix of altitude numbers. Then, of course, the question was what to do with it, and in particular, how to look at it. Remember that in those days, computers didn’t have much in the way of image display capability; everything was either low resolution or low color fidelity or both (the Pixar graphics guys had some high end display hardware, but I didn’t have access to it and anyway I’d have to write more code to do something with the file I had, which wasn’t in any kind of standard image format). Then I realized that we had these new Apple LaserWriter printers. Although they were 1-bit per pixel monochrome devices, they printed at 300 DPI, which meant you could get away with dithering for grayscale. And you fed stuff to them using PostScript, a newfangled Forth-like programming language. So I ordered Adobe’s book on PostScript and went to work.

I wrote a little C program that took my big height field and reduced it to a 500×100 image at 4 bits per pixel, and converted this to a file full of ASCII hexadecimal values. I then wrapped this in a little bit of custom PostScript that would interpret the hex dump as an image and print it, and voilà, out of the printer comes a lovely grayscale topographic map. Another little quick filter and I clipped all the topography below a reasonable altitude to establish “sea level”, and I had some pretty sweet looking landscape. At this point, you could make out a bunch of obvious geographic features, so we picked locations for cities, and drew some lines for roads between them, and suddenly it was a world. A little bit more PostScript hacking and I was able to actually draw nicely rendered roads and city labels directly on the map. Then I blew it up to a much larger size and printed it over several pages which I trimmed and taped together to yield a six and a half foot wide map suitable for posting on the wall.
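For anyone curious what that pipeline looks like, here is a small reconstruction in Python rather than C. It is a sketch of the general technique (clip at sea level, quantize to 16 gray levels, emit one hex digit per pixel, wrap it in a PostScript `image` call), not the original program, and the function names, the linear scaling, and the page placement are my own assumptions.

```python
def quantize(height_field, sea_level, levels=16):
    """Clip everything below sea level, then scale to gray levels 0..levels-1."""
    clipped = [[max(h, sea_level) for h in row] for row in height_field]
    top = max(max(row) for row in clipped)
    span = (top - sea_level) or 1          # avoid dividing by zero on flat maps
    return [
        [int((h - sea_level) / span * (levels - 1) + 0.5) for h in row]
        for row in clipped
    ]


def to_postscript(pixels):
    """Wrap 4-bit pixels in PostScript that prints them as a gray image."""
    height, width = len(pixels), len(pixels[0])
    bytes_per_row = (width + 1) // 2       # two 4-bit samples per byte
    rows = []
    for row in pixels:
        # PostScript expects each image row to start on a byte boundary.
        padded = row + [0] * (bytes_per_row * 2 - width)
        rows.append("".join(f"{v:X}" for v in padded))
    header = [
        "%!PS",
        f"/rowbuf {bytes_per_row} string def",
        "72 72 translate",                 # one inch in from the page corner
        f"{width} {height} scale",         # one point per pixel
        f"{width} {height} 4 [{width} 0 0 -{height} 0 {height}]",
        "{ currentfile rowbuf readhexstring pop } image",
    ]
    return "\n".join(header + rows + ["showpage"]) + "\n"


heights = [[0, 5, 10], [2, 10, 3]]
pixels = quantize(heights, sea_level=2)
print(to_postscript(pixels))
```

In this encoding sample value 0 prints as black and 15 as white, so the sea comes out black and the peaks white; flipping that is a one-line change in `quantize`.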

As I was going through my archives in conjunction with the project to reboot Habitat, I encountered the original PostScript source for the map. I ran it through Ghostscript and rendered it into a 22,800×4,560 pixel TIFF image which I could open in Photoshop and wallow around in. This immediately tempted me to do a bit more embellishment with Photoshop, so a little bit more hacking on the PostScript and I could split the various components of the image (the topographic relief, the roads, the city labels, etc.) into separate images which could then be individually manipulated as layers. I colorized the topography, put it through a Gaussian blur to reduce the chunkiness, and did a few other little bits of cosmetic tweaking, and the result is the image you see here (clicking on the picture will take you to a much larger version):

(Also, if you care to fiddle with this in other formats, the PostScript for the raw map can be gotten here. Beware that depending on what kind of configuration your browser has, your browser may just attempt to render the PostScript, which might not have exactly the results you want or expect. Have fun.)

There are a number of interesting details here worth mentioning. Note that the Habitat world is cylindrical. This lets us encompass several different interesting storytelling possibilities: Going around the cylinder lets you circumnavigate the world; obviously, the first avatar to do this would be famous. The top edge is bounded by a wall, the bottom edge by a cliff. This means that you can fall off the edge of the world, or explore the wall for mysterious openings. By the way, the top edge is West. Habitat compasses point towards the West Pole, which was endlessly confusing for nearly everyone.
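To make the cylinder concrete, here is a minimal sketch of the movement rule it implies, using map coordinates in which columns wrap around and rows run from the wall (row 0, West) to the cliff (the last row). This is purely illustrative and is not how Habitat actually represented its regions; the `Step` type, the `move` function, and the grid dimensions are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    col: int
    row: int
    event: str  # "ok", "wall", or "fell"


def move(col, row, dcol, drow, width, height):
    """Take one step on a cylindrical map.

    Columns wrap, so walking far enough in one direction brings you back
    to where you started. Rows do not: row 0 is the wall and stepping
    past the last row means going over the cliff.
    """
    new_col = (col + dcol) % width  # wraparound: this is the circumnavigation
    new_row = row + drow
    if new_row < 0:
        return Step(new_col, 0, "wall")
    if new_row >= height:
        return Step(new_col, height - 1, "fell")
    return Step(new_col, new_row, "ok")
```

For example, on a hypothetical 10-column map, `move(9, 0, 1, 0, 10, 5)` steps off the right-hand edge and lands back in column 0.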

We had all kinds of plans for what to do with this, which obviously we never had a chance to follow through on. One of my favorites was the notion that if you walked along the top (west) wall enough, eventually you’d find a door, and if you went through this door you’d find yourself in a control room of some kind, with all kinds of control panels and switches and whatnot. What these switches would do would not be obvious, but in fact they’d control things like the lights and the day/night cycle in different parts of the world, the color palette in various places, the prices of things, etc. Also, each of the cities had a little backstory that explained its name and what kinds of things you might expect to find there. If I run across that document I’ll post it here too.

April 29, 2014

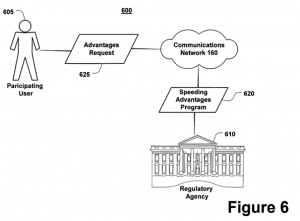

Troll Indulgences: Virtual Goods Patent Gutted [7,076,445]

Another terrible virtual currency/goods patent has been rightfully destroyed – this time in an unusual (but worthy) way: From Law360: EA, Zynga Beat Gametek Video Game Purchases Patent Suit, By Michael Lipkin

Law360, Los Angeles (April 25, 2014, 7:20 PM ET) — A California federal judge on Friday sided with Electronic Arts Inc., Zynga Inc. and two other video game companies, agreeing to toss a series of Gametek LLC suits accusing them of infringing its patent on in-game purchases because the patent covers an abstract idea. … “Despite the presumption that every issued patent is valid, this appears to be the rare case in which the defendants have met their burden at the pleadings stage to show by clear and convincing evidence that the ’445 patent claims an unpatentable abstract idea,” the opinion said.

The very first thing I thought when I saw this patent was: “Indulgences! They’re suing for Indulgences? The prior art goes back centuries!” It wasn’t much of a stretch, given the text of the patent contains this little fragment (which refers to the image at the head of this post):

Alternatively, in an illustrative non-computing application of the present invention, organizations or institutions may elect to offer and monetize non-computing environment features and/or elements (e.g. pay for the right to drive above the speed limit) by charging participating users fees for these environment features and/or elements.

WTF? Looks like reasoning something along those lines was used to nuke this stinker out of existence. It is quite unusual for a patent to be tossed out in court. Usually the invalidation process has to take a separate track, as it has with other cases I’ve helped with, such as The Word Balloon Patent. I’m very glad to see this happen – not just for the defendants, but for the industry as a whole. Just adding “on a computer [network]” to existing abstract processes doesn’t make them intellectual property! Hopefully this precedent will help kill other bad cases in the pipeline already…

December 19, 2013

Audio version of classic “BlockChat” post is up!

On the Social Media Clarity Podcast, we’re trying a new rotational format for episodes: “Stories from the Vault” – and the inaugural tale is a reading of the May 2007 post The Untold History of Toontown’s SpeedChat (or BlockChat™ from Disney finally arrives)

Link to podcast episode page (audio: http://traffic.libsyn.com/socialmediaclarity/138068-disney-s-hercworld-toontown-and-blockchat-tm-s01e08.mp3)

August 2, 2013

Armed and Dangerous

[This is a repost from my long-dead Yahoo 360 blog, originally posted August 2006 about events in spring 2002. I decided to recover this posting from the Internet Archive because recent events, 12 years after 9/11, show that the authorities are STILL over-panicking about our security.]

How could I know that singing “Man of Constant Sorrow” in public could be considered a terrorist weapon?

One early spring evening in 2002 I went for a walk in my neighborhood wearing my FDNY September 11th Memorial T-Shirt (shown above), telling my family that I would return just after sundown (about 30 minutes).

About an hour and a half later I arrived home, teasing them by explaining that I’d “just been handcuffed, interrogated, searched, had a machine gun pointed directly at me, been ordered to my knees two feet from a K-9 gnashing its teeth, and was nearly arrested as a terrorist … all just for singing out loud.”

My family didn’t believe me at first – until I showed them the reddened cuff marks on my wrists and the business card of PAPD Sergeant Sandra Brown.

Now they wanted to hear the whole story…

One mild spring evening in 2002, I felt like singing. I wanted to teach myself some bluegrass and spirituals that I’d discovered recently (mostly as the result of recently seeing O Brother Where Art Thou?) and I felt like being real loud. So, rather than disturb my family, I decided to go for a walk and practice elsewhere. Given the weather, I’d only need a tshirt and jeans to keep me warm until well past sundown. I started singing right away when I got outside, but then noticed some of my neighbors, so I thought that it’d be better if I could find a place to belt out my baritone/bass tones where no one would care if I were in tune. I was practicing, after all.

“The pedestrian walkway over 101 would be perfect”, I thought, “with any luck I’ll be completely drowned out.”

I’d made good time hiking to the pedestrian overpass, humming “Ahhhh am a maaaaan, of con-stant sah-roooow…” along the way. By the time I reached the apex of the passage, the sun was very low in the west, dropping just below the hills. The gold-purple sky was an inspirational sight. The constant breeze from the cars whizzing by below was quite effective in carrying my voice away, so I cranked up the volume. I was having a great time and expanded my material to include my favorite Webber show tunes. Other than a pair of guys walking by, my only audience was the late evening commuters, most of whom had just turned on their headlights. It was a blast. For 15 minutes I was able to belt out anything I wanted, as loud as I could.

By the time I was starting to feel the effects of singing that loudly for so long, the sun had completely set, so I decided to head home. I was running a little later than I’d expected, so I increased my gait just a bit.

As my stride increased (mostly due to gravity) on my way down the sloped ramp back into the neighborhood, directly in front of me appeared two Palo Alto police officers who had just started their way up the ramp. Just a moment after I noticed them, they noticed me, and then did something very, very, strange. They quickly walked backward away from me until they were out of sight, around the corner, at the base of the ramp. I’d never seen anyone do anything like that before. How on earth could I intimidate two police officers just by walking down a pedestrian ramp? As I proceeded down to the exit I called out loud: “HELLO? Is everything alright?”

As I came to the bottom and walked around the corner there were about a half dozen of Palo Alto’s finest, one with what looked like an M-16 and others with pistols pointed directly at me. There was much yelling, and I saw and heard a dog barking threateningly – “Don’t move!” “Turn Around!” “Get Down!” “Put your hands where we can see them!” “Bark! Bark! [Jangling of a large dog chain.]”

I wasted no time at all: I put my hands in the air and turned my back to them. I kneeled, quickly enough that it hurt. “I think there’s been some mistake. Whatever you do, please don’t let go of that dog” is all I could think to say at the moment. I had no idea what the heck was going on, but I didn’t want to give them any reason to make a horrible mistake.

“Who are you?” “Where are you from?” “What are you doing here?” “What are you carrying?” were the rapid-fire questions I can remember. I quickly explained that I was on a walk, singing songs. “The only thing I’m carrying is my wallet, which shows I live two blocks from here”, I said, still kneeling. I didn’t even have my house keys. “Take it out and toss it on the ground, but move very slowly”, said a woman who seemed to be in command of the situation. She was to my left, but still behind me where I couldn’t see her. Very, very cautiously, I complied. “Do you have anything else?” The request was rather urgent and sounded specific. “No. Nothing.”

An officer came up and handcuffed my wrists behind my back, aggressively patted me down, and helped me to my feet. My wallet was retrieved by the commander-woman. Once I could face the squad again, I clearly recognized her as Sandra Brown, an officer who had done many hours as a bicycle-beat cop in the downtown Palo Alto area, where my family had spent nearly every Friday evening for nearly 14 years. I was hoping that this meant she might recognize me as well, helping to defuse whatever this horrible mess was all about.

She walked me over to the back of her police cruiser, pressing me back against the trunk hard enough that my handcuffed wrists dug into the metal, letting me know that I wasn’t going anywhere without her permission. She grabbed the walkie-talkie that had been set on the roof of the car, which I hadn’t previously noticed, and spoke into it: “(muffled) check in. Anything?” I couldn’t make out the response, but the meaning was made clear to me immediately when she asked:

“Did you go all the way across the overpass?” “No.”

“Did you see anyone else up there?” “Just two guys that walked by about 20 minutes ago. Nothing unusual.”

“Where did you put it?” “Put what? I didn’t have anything.”

“Did you leave behind any clothing?” “Clothing? What? No.”

Fifteen to twenty minutes passed. Officer Brown checked my ID and confirmed that I was local. She noticed my shirt for the first time. The cuffs were starting to hurt. I’d been told to be quiet. The sturdy but small blond woman with the assault rifle was keeping it at the ready, but it wasn’t pointing at me. The dog had stopped barking, but was in some kind of station-keeping pose. Lots more radio traffic. I finally pieced together that at least two officers on the other side of the ramp were looking for something, something that they thought I might have hidden there, something critical to this situation.

Finally, the invisible officers at the other end of the radio apparently gave up the search. My heart stopped racing. My temperature started to drop. You see, I had finally stopped thinking that I was likely to end up wounded or dead due to someone panicking.

Once the search was over, it became clear that maybe the situation was not what they had expected/feared. Officer Brown started to explain: “We got a phone call from someone on a cell phone driving on 101 reporting that a sniper, wearing a trench coat, was shooting at cars with a high-powered rifle or machine gun.” Apparently this triggered the Palo Alto equivalent of the SWAT team.

I couldn’t resist: “An overweight middle-aged man, singing the lead from The Phantom of the Opera (probably waving his arms about, crooning to Christine about being ‘inside her mind’), while wearing jeans and a tshirt that reads All Gave Some, Some Gave All on the back, somehow looks like a Columbine kid terrorizing the freeway with an automatic weapon? What irony: Wear a public-safety-supporting tshirt, get suspected of being a sniper.” This observation did get a bit of a giggle out of the one with the real Tommy gun, finally hanging peacefully at her side.

I was feeling a little put out: “One call with such a vague description gets this level of response? Did 9/11 really turn us all into people looking for a terrorist behind every darkened corner? A trench coat? This is pretty unbelievable.” I was starting to get very sore about my wrist pain. “We’re sorry, we need to be extra cautious in situations such as these, if it had turned out to be true… In any case, you’ll have a great story to tell your kids and grandkids.”